Dual-sensor phone cameras: Are they any good?

Making sense of multi-sensor cameras on the LG G5, Huawei P9 and more

Whenever we’re asked for phone advice, the question is often followed by “and is the camera any good?” Camera tech terms can be some of the most impenetrable around, ripe for marketing people to use to bludgeon you into buying a phone.

One of the more recent techniques is to use multi-sensor, multi-lens camera setups to dazzle us into thinking a phone is a “next-gen, iPhone 6S-killing game-changer”. Enough to make you want to groan, isn’t it?

However, some multi-sensor setups have the potential to radically change how you approach your phone camera. Who’s starting to sound like the marketeer now? Let’s take a look at the fails and (potential) winners in this area, and why multi-sensor setups are probably the future of phone photography.


Huawei P9 and P9 Plus

Type: Two separate sensors. One RGB and one monochrome. Engineered by Leica.

Huawei has done something rather special with its latest phone. By teaming up with Leica for the P9, the Chinese brand has instantly given itself more camera cred, and it really shows. The dual-sensor setup here is different to any of the others below because both sensors are 12-megapixel. What’s more, one is a dedicated monochrome sensor while the other is a conventional colour one.

This is great for a couple of reasons: you can shoot true black and white images that look really, really good, and low-light performance improves. Because the monochrome sensor has no colour filter over its pixels, it lets in 300% more light than the RGB one, which gives you much brighter night-time shots. Check out the samples below.
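To put the “300% more light” figure in photographic terms: 300% more means four times the light, and since each photographic stop doubles the light, that is two stops. If photon shot noise dominates (an idealised assumption that ignores read noise and Huawei’s own processing), signal-to-noise ratio grows with the square root of the light collected, so four times the light roughly doubles it. A minimal sketch; the function names are our own hypothetical helpers.

```python
import math

def light_gain(percent_more: float) -> float:
    """Convert 'X% more light' into a multiplier (300% more -> 4x)."""
    return 1.0 + percent_more / 100.0

def gain_in_stops(multiplier: float) -> float:
    """Each photographic stop doubles the light, so stops = log2(multiplier)."""
    return math.log2(multiplier)

def shot_noise_snr_gain(multiplier: float) -> float:
    """Assuming shot noise only, SNR scales with the square root of the light collected."""
    return math.sqrt(multiplier)

m = light_gain(300)
print(m, gain_in_stops(m), shot_noise_snr_gain(m))  # 4.0 2.0 2.0
```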


An example of a pure black and white shot, unedited, taken with the Huawei P9

This shows the impressive low-light ability of the Leica-engineered camera. You can still pick out facial features, the sun doesn’t overexpose the shot and there’s so much detail packed in.


The two sensors also let you achieve some nice-looking bokeh effects: the blurred-background look, with the foreground subject in focus.
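Phone lenses are too small to produce much natural background blur, so dual-camera phones fake it: they estimate how far away each pixel is, then blur pixels according to their distance from the focus point. Below is a minimal one-dimensional sketch of that idea, assuming a depth value is already available for every pixel; `synthetic_bokeh` is a hypothetical helper using a plain box blur, not Huawei’s actual pipeline.

```python
def synthetic_bokeh(pixels, depths, focus_depth, strength=1.0, max_radius=3):
    """Blur a 1D row of pixel values by how far each pixel sits from the focus depth.

    pixels: brightness values; depths: per-pixel distance estimates (same length),
    e.g. from a hypothetical dual-camera depth map. Pixels at focus_depth stay sharp.
    """
    if len(pixels) != len(depths):
        raise ValueError("pixels and depths must be the same length")
    n = len(pixels)
    out = []
    for i in range(n):
        # Blur radius grows with distance from the focal plane, capped at max_radius.
        radius = min(max_radius, int(round(strength * abs(depths[i] - focus_depth))))
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        window = pixels[lo:hi]
        out.append(sum(window) / len(window))  # simple box blur over the window
    return out

row = [0, 10, 0, 10, 0, 10]
depths = [1, 1, 1, 5, 5, 5]  # subject at 1m, background at 5m (made-up values)
print(synthetic_bokeh(row, depths, focus_depth=1))  # [0.0, 10.0, 0.0, 5.0, 6.0, 5.0]
```

Note that the background pixel next to the subject averages in foreground values. If the depth map is wrong around an edge, the blur bleeds across it, which is exactly the kind of artefact that plagues depth-sensing setups.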


LG G5

Type: wide-angle GoPro wannabe

The latest multi-sensor camera phone is the LG G5, which is the most ambitious (or at least the most out-there) flagship we’ve seen in years. It lets you plug modules into its base, but the camera hardware I’m talking about is hardwired into the main body of the phone.


Take a look at the back. On the right is a 16-megapixel camera much like the LG G4’s, which was one of the best of 2015.

On the left is a wide-angle lens. It has a 135-degree field of view, which is similar to the ‘Medium’ FoV setting of a GoPro. It gets you the action cam effect, basically, offering a wider view of the world than we consciously perceive.


This is why fisheye-effect photos look a bit odd: they squeeze the detail from a very wide view of a scene into a more conventional frame by distorting the image. So, point one for the LG G5 is that it can work as an action cam. That’s pretty neat.
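The squeeze can be put in numbers. A conventional rectilinear lens places a ray arriving θ degrees off-axis at a distance f·tan θ from the image centre. That distance heads towards infinity as θ approaches 90°, so at 135° across, the edges get stretched hard. Fisheye lenses instead use mappings such as the equidistant one, f·θ, which keeps a wide view compact at the cost of bending straight lines. LG doesn’t say which mapping the G5 uses, so the equidistant curve here is purely illustrative, and we treat 135° as the full angle across the frame.

```python
import math

def rectilinear_radius(theta_deg, f=1.0):
    """Distance from image centre for a ray theta degrees off-axis, rectilinear (pinhole) lens."""
    return f * math.tan(math.radians(theta_deg))

def equidistant_radius(theta_deg, f=1.0):
    """Same ray under an equidistant fisheye mapping (illustrative): radius grows linearly with angle."""
    return f * math.radians(theta_deg)

def rectilinear_edge_stretch(theta_deg):
    """Radial magnification of a rectilinear lens relative to the centre: d(tan t)/dt = 1/cos^2 t."""
    return 1.0 / math.cos(math.radians(theta_deg)) ** 2

half_fov = 135 / 2  # 67.5 degrees either side of centre
for name, r in [("rectilinear", rectilinear_radius(half_fov)),
                ("equidistant", equidistant_radius(half_fov))]:
    print(f"{name}: edge at {r:.2f} focal lengths from centre")
print(f"rectilinear stretch at the edge: {rectilinear_edge_stretch(half_fov):.1f}x")
```

At the edge of a 135° view, the rectilinear mapping needs about 2.41 focal lengths of image radius against about 1.18 for the equidistant one, and it magnifies detail there roughly 6.8 times more than at the centre.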

It goes further, though. Using clever processing, the LG G5 can merge the results from the two cameras to let you zoom out to 0.5x magnification while shooting normally. It’s like a reverse digital zoom (but not rubbish) and could prove very useful for framing those shots where you just can’t get far enough away. We’ve only briefly tried this out ourselves, but if LG can maintain the 16-megapixel quality of the images in the centre of the frame without visible distortion or joins around the edges, it could be a winner.

This is almost certainly the most promising fully-realised multi-cam phone yet.

HTC One M8

Type: parallax errors

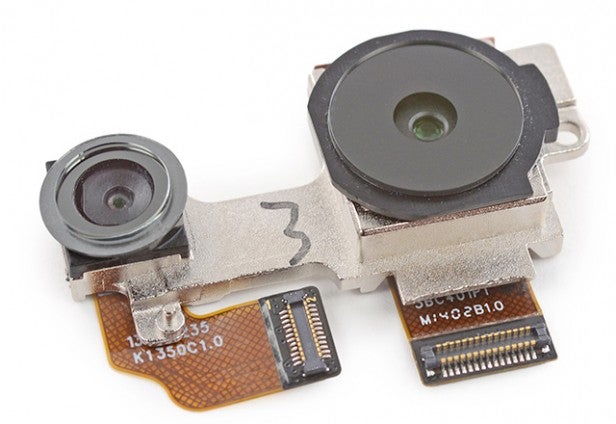

Perhaps the first phone to bring dual-camera setups to the attention of the average phone buyer was the HTC One M8. It has two cameras on the back, one in the normal spot and another above, right near the top of the phone.

The idea here is that the pair works a little like our eyes, analysing the differences in what each camera sees and using them to tell near objects from far-away ones. It’s parallax at work.
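The parallax principle boils down to one formula from stereo vision: depth Z = f·B/d, where f is the focal length in pixels, B is the distance between the two cameras (the baseline) and d is the disparity, meaning how many pixels a point shifts between the two images. The sketch uses made-up values (a 1,500-pixel focal length and a 2cm baseline), not the One M8’s actual specs, and `depth_from_disparity` is a hypothetical helper. It also hints at why results get patchy: at small disparities, a one-pixel matching error swings the depth estimate enormously.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Stereo depth Z = f * B / d. Disparity must be positive; zero means 'infinitely far'."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px

FOCAL_PX = 1500.0   # hypothetical focal length, in pixels
BASELINE_M = 0.02   # hypothetical 2cm gap between the two cameras

for d in (30, 4, 3, 2):
    print(f"disparity {d:>2} px -> {depth_from_disparity(d, FOCAL_PX, BASELINE_M):.1f} m")
```

With these numbers, a point shifted by 3 pixels sits about 10m away, but misjudging that shift by a single pixel puts it anywhere from 7.5m to 15m.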

These two camera sensors are not remotely the same, though. The main imaging sensor is the lower one (on the right in the image), while the one at the top is a much lower-quality sensor whose sole job is to provide depth data. It’s playing a game of spot the difference.


In action, the results of this sort of setup are almost always patchy, especially when trying to separate foreground and background around irregularly shaped objects. The same idea was used all the way back in 2011 with the HTC Evo 3D, a US phone, and was swiftly dropped then too. HTC can’t stay away from it for long, though, having used the same technique in the HTC One M9 Plus.

You usually end up with artefact-laden photos, and the whole thing tends to feel like a bit of a gimmick. What makes it worse is that you can only really see the results by turning the phone side to side or applying filters (colour pop, background blur) that highlight the process’s shortcomings. They don’t have 3D screens, after all.

But wait, the LG Optimus 3D (2011) had both a 3D screen and a 3D camera, yet was still a flop, or at least not much more than an experiment. That should tell you how limited the appeal of this sort of setup is.

Amazon Fire Phone

Type: 3D interface embarrassment

Anyone remember the Amazon Fire Phone? Almost no-one liked it. Almost no-one bought one. It had the most gimmicky approach to using 3D cameras we’ve ever seen in a phone. This is one to put in the ‘Do not recycle’ bin.

It has four front cameras that don’t take pictures at all. Instead they track your face to provide a dynamic ‘pseudo 3D’ interface, in which the 3D-modelled interface elements tilt a bit as you move your head left or right, up or down.

You could argue it doesn’t really belong in this list at all, as the cameras it uses to take photos on the front and back are separate and entirely conventional. But you can’t go through the history of multi-camera use in phones without mentioning this little guy.

We actually ended up switching off this ‘Dynamic Perspective’ feature towards the end of testing the phone. That’s how useful it is.

The future: Light and Corephotonics

Type: Computational photography

To date our experiences with multi-camera setups have been pretty bad. Some have been plenty of fun to play with, but I can’t imagine using any of them once that first-week excitement wears off.

The LG G5 may change that to an extent. Wide-angle photos and video will make for a great, and much quicker, alternative to a panorama. Some professional photographers spend thousands of pounds on wide-angle lenses, after all.

The multi-sensor cameras that will change phone photography in a more important way are yet to come, though.

They can be used to mimic optical zoom functionality much better than digital zoom ever could, and to radically reduce noise (and therefore increase low-light flexibility). Multiple focal lengths can be used on the same plane so that when you zoom you only need to bridge the gap between them digitally. And multiple sensors with the same focal length can be used to improve performance at higher (low-light) sensitivities.
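The noise-reduction argument rests on a statistical fact: if several sensors record the same scene with independent random noise, averaging their aligned readings cuts the noise by the square root of the number of sensors, so four sensors halve it. The simulation below assumes perfect alignment and Gaussian noise, which real multi-camera systems only approximate (they first have to correct for the parallax between lenses); the function names and noise figures are hypothetical.

```python
import random
import statistics

def simulate_readings(true_value, noise_sd, n_sensors, rng):
    """One noisy reading per sensor for the same scene point (hypothetical Gaussian noise model)."""
    return [rng.gauss(true_value, noise_sd) for _ in range(n_sensors)]

def merged_pixel(readings):
    """Simplest possible merge: average the aligned readings."""
    return sum(readings) / len(readings)

def noise_of_merge(n_sensors, trials=20000, true_value=100.0, noise_sd=10.0, seed=1):
    """Measure the spread (standard deviation) of the merged value over many simulated shots."""
    rng = random.Random(seed)
    merged = [merged_pixel(simulate_readings(true_value, noise_sd, n_sensors, rng))
              for _ in range(trials)]
    return statistics.pstdev(merged)

for n in (1, 4, 16):
    print(n, "sensors: noise", round(noise_of_merge(n), 2),
          "| theory", round(10.0 / n ** 0.5, 2))
```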

One company maxing out these ideas is Light. It’s working on an Android-based camera called the Light L16. We talked to Light last year, and while it’s currently only working on a standalone camera, it told us this sort of computational photography was the future for phones.

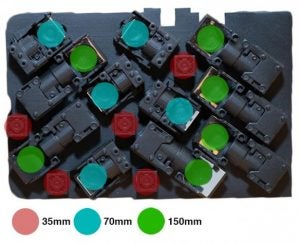

The Light L16 has a whopping 16 different sensors on its front, in three groups at 35mm, 75mm and 150mm focal lengths. This lets you zoom smoothly up to 150mm, and having multiple sensors at each length should get you much better high-ISO performance than a regular phone.
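To see what those focal lengths mean in practice, here is the standard field-of-view formula, FoV = 2·atan(w / 2f), with w the 36mm width of a full-frame sensor. It assumes the quoted figures are 35mm-equivalent focal lengths (the usual convention for such numbers) and rectilinear lenses. Zoom factors are simply ratios of focal lengths; the helper names are hypothetical.

```python
import math

FULL_FRAME_WIDTH_MM = 36.0  # width of a 35mm film frame, the reference for "equivalent" focal lengths

def horizontal_fov_deg(equiv_focal_mm):
    """Horizontal field of view of a rectilinear lens at a 35mm-equivalent focal length."""
    return math.degrees(2 * math.atan(FULL_FRAME_WIDTH_MM / (2 * equiv_focal_mm)))

def zoom_factor(focal_mm, base_focal_mm):
    """Optical zoom between two focal lengths is just their ratio."""
    return focal_mm / base_focal_mm

for f in (35, 75, 150):
    print(f"{f}mm: {horizontal_fov_deg(f):.1f} deg wide, {zoom_factor(f, 35):.2f}x relative to 35mm")
```

That works out to roughly 54°, 27° and 14° horizontally, with the 150mm group giving about 4.3x the reach of the 35mm one.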

16 cameras on a phone? Yes, that’s not happening any time soon.

However, a company called Corephotonics has already made multi-sensor designs based on similar ideas. At MWC 2016 it showed off Hawkeye, a sensor design that offers ‘5x optical zoom’.

Calling this true optical zoom is potentially misleading, as what it actually involves is a pair of prime lenses whose output is ‘merged’ to offer the impression of a smooth optical zoom. In a normal camera that involves a whole bunch of lens elements moving back and forwards. There’s no room for that here: the whole reason for computational photography’s existence in a phrase.

The difficulty comes when you’re half-way between the 1x and 5x zoom settings. There, only part of the frame is covered by what the effective 5x lens sees (Corephotonics told us it’s not exactly a 5x lens optically), and the rest has to come from a cropped, enlarged portion of the 1x lens’s view. That’s where some real smarts are needed to stop the outer part of your image looking flat-out blurry. “Software is used to create the smooth transition so that intermediate magnifications advance smoothly utilising the maximal amount of information from both sensors,” Corephotonics told us.
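The geometry behind that problem is simple if you assume two rectilinear lenses pointing at the same centre (ignoring the small offset between them). At a requested zoom z between 1x and the tele lens’s factor T, the 1x image must be cropped and enlarged z times, while the tele image fills only the central z/T of the frame’s width, or (z/T)² of its area. `zoom_plan` is a hypothetical helper, and T = 5 is the nominal figure, which Corephotonics says isn’t exact.

```python
def zoom_plan(z, tele=5.0):
    """For a zoom z between the wide (1x) and tele lens, report how the frame is assembled."""
    if not 1.0 <= z <= tele:
        raise ValueError("this sketch only covers zooms between the wide (1x) and tele lens")
    wide_upscale = z                     # the 1x image is cropped and enlarged z times
    tele_width_fraction = z / tele       # share of the output frame's width the tele lens fills
    tele_area_fraction = tele_width_fraction ** 2
    return wide_upscale, tele_width_fraction, tele_area_fraction

for z in (1.0, 2.5, 5.0):
    up, w, a = zoom_plan(z)
    print(f"{z}x: wide image enlarged {up}x; tele covers {w:.0%} of width, {a:.0%} of area")
```

At 2.5x, the tele lens covers the middle half of the frame’s width but only a quarter of its area; the other three quarters must come from a 1x image blown up 2.5 times, which is where the blur creeps in.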

If you’re not interested in zooming, Corephotonics also makes a sensor array with one 13-megapixel colour sensor and one 13-megapixel B&W sensor, whose data is merged to produce a single ultra-low-noise 21-megapixel photo. While 5x optical zoom cameras are likely to win more column inches, it’s tech like this that is more important to the development of phone cameras.
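One simple way to picture a mono-plus-colour merge is to take brightness from the monochrome sensor and colour from the RGB one. The sketch below assumes the two images are already perfectly aligned pixel for pixel: it splits the colour pixel into luma and colour-difference signals (using ITU-R BT.601 weights), swaps in the mono reading as the luma, and converts back. It illustrates the general idea only, not Corephotonics’ algorithm, and it doesn’t show how the 21-megapixel output resolution is reached; `fuse_pixel` and `fuse_image` are hypothetical names.

```python
KR, KG, KB = 0.299, 0.587, 0.114  # ITU-R BT.601 luma weights (they sum to 1)

def luma(r, g, b):
    """Brightness (luma) of an RGB pixel."""
    return KR * r + KG * g + KB * b

def fuse_pixel(rgb, mono):
    """Keep the colour sensor's colour differences, take brightness from the mono sensor."""
    r, g, b = rgb
    y = luma(r, g, b)
    r_minus_y, b_minus_y = r - y, b - y  # colour-difference signals from the colour sensor
    r2 = mono + r_minus_y
    b2 = mono + b_minus_y
    # Solve mono = KR*r2 + KG*g2 + KB*b2 for g2, so the fused pixel's luma equals the mono reading.
    g2 = (mono - KR * r2 - KB * b2) / KG
    return (r2, g2, b2)

def fuse_image(rgb_pixels, mono_pixels):
    """Fuse two aligned, equally sized pixel lists (no alignment or clipping is done here)."""
    if len(rgb_pixels) != len(mono_pixels):
        raise ValueError("images must have the same number of pixels")
    return [fuse_pixel(p, m) for p, m in zip(rgb_pixels, mono_pixels)]

fused = fuse_image([(120.0, 80.0, 40.0)], [95.0])
print(fused, luma(*fused[0]))
```

If the mono reading matches the colour pixel’s own luma, the pixel comes back unchanged; a real pipeline would also clip results to the valid range.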

We don’t know about you but we’ve thought “We wish our phone’s night photos weren’t crap” more often than we’ve wished for a zoom lens. Corephotonics predicts phones using its technology will arrive in the second half of 2016, with 5x zoom models likely in 2017.

Are multi-sensor cameras the future?

Multi-sensor phone cameras have had a bad start. But they are the future, almost certainly. Optimising a sensor’s architecture can only get you so far, and the chances of high-end phones getting much larger in order to fit in, say, a 1-inch sensor, are almost nil.

The LG G5 and Huawei P9 show us the beginning of a turnaround for multi-camera phones. Sure, the G5’s GoPro-style antics are a bit trendy, but they seem far more useful than what you get in, for example, the HTC One M8. Apple bought computational photography company LinX in 2015 so, who knows, there might even be some of this tech in the iPhone 7.

Further reporting by Max Parker