Nvidia GeForce GTX 1080 Review

Updated: 1080 Ti changes the game, and there's a price drop incoming

Verdict

Pros

- Fantastic performance

- Great design

- Highly overclockable

Cons

- Doesn't hit 60fps in our 4K benchmarks

- More expensive than previous generations

Key Specifications

- Review Price: £620.00

- New Pascal architecture

- 8GB GDDR5X memory

- 2560 CUDA cores

- 1607MHz base clock speed

- 320GB/s memory bandwidth

Read our original review from 2016 below

What is the Nvidia GeForce GTX 1080?

The GTX 1080 is Nvidia’s latest top-end graphics card, ready to take on this year’s two-pronged assault of VR and 4K gaming. Its performance makes it by far the most powerful consumer-level graphics card (ignoring the outrageous Titan X), and netted it our Graphics Card of the Year award at our 2016 Trusted Reviews Awards ceremony.

If you’re wondering why Nvidia’s made such an enormous song and dance out of a consumer graphics card launch, it’s because the company spent ‘billions’ on getting its new architecture to market and now has to make the numbers add up, both financially and in GPU performance.

While the performance of the GTX 1080 isn’t in doubt, you definitely don’t need to spend £600+ on it if your gaming ambitions don’t extend to the world of 4K and VR.

Nvidia GeForce GTX 1080 – Specs & Technology Explained

To explain why the new GTX 1080 is supposed to be so powerful, we have to talk tech and the GTX 1080 specs for a few paragraphs. If that’s not to your tastes, you can skip to our benchmarking tests on page two of this review.

Still with me? Good. Here comes the tech…

To boost performance and efficiency on the hardware side, Nvidia has moved on from its previous architecture, Maxwell, and introduced a new one called Pascal. The key feature of Pascal is that it uses a smaller manufacturing process (16 nanometres versus 28nm), which means a greater number of transistors fit on any given piece of silicon.

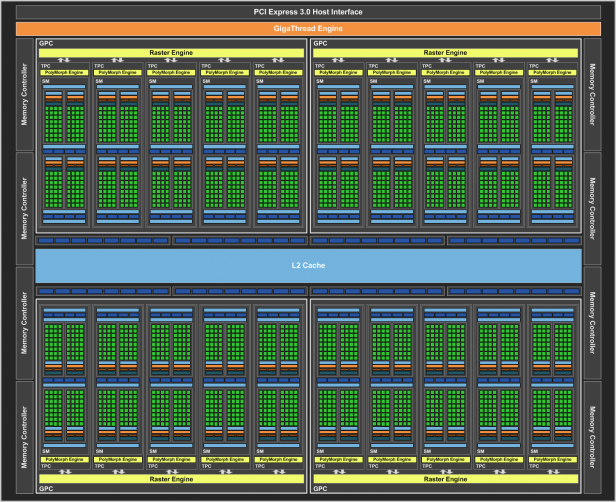

Doing so increases performance with a much smaller effect on power consumption (and therefore heat and noise) than simply adding transistors by using a larger piece of silicon.

A diagram of the layout of the 1080 GPU. Count the CUDA cores

For you and me, where this really matters is that it allows for an increase in the number of CUDA cores, which do the bulk of the work. In the case of the GTX 1080, there are 2,560 of them, a quarter more than the 2,048 on the GTX 980. Clock speeds are higher, too, with the base clock up from 1,126MHz to 1,607MHz. All this comes with a peak power draw increase of just 15W (from 165W to 180W) over the GTX 980.
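If you want to check those generational gains yourself, the sums are easy enough to script. What follows is only a quick sketch using the figures quoted above; the dictionary keys and the `percent_increase` helper are names made up for illustration.

```python
# Figures quoted in this review for the GTX 980 and GTX 1080.
gtx_980 = {"cuda_cores": 2048, "base_clock_mhz": 1126, "peak_power_w": 165}
gtx_1080 = {"cuda_cores": 2560, "base_clock_mhz": 1607, "peak_power_w": 180}


def percent_increase(old, new):
    """Return the relative increase from old to new, as a percentage."""
    return (new - old) / old * 100


# Hypothetical helper structure: one percentage per quoted spec.
increases = {
    spec: percent_increase(gtx_980[spec], gtx_1080[spec]) for spec in gtx_980
}

for spec, value in increases.items():
    print(f"{spec}: +{value:.1f}%")
```

On these numbers, the core count and base clock rise far more steeply than the rated power draw, which is the efficiency argument for the smaller manufacturing process in a nutshell.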

Peak power draw for our entire test system was 286W, compared to 270W and 336W on the GTX 980 and GTX 980Ti respectively, and peak temperatures never went beyond 75°C on Nvidia’s fairly conservative low fan-speed preset.

Even those used to big technological leaps can’t fail to be just a little impressed.

It’s not just the processing power that’s improved; we also finally see a new development when it comes to memory. The GTX 1080 uses GDDR5X memory – like the RAM in your PC or laptop, but much faster and more expensive – replacing the GDDR5 found in the previous generation of Nvidia GPUs.

The GTX 1080 has 8GB of memory versus 4GB in the GTX 980, and its higher effective memory clock of 10,000MHz versus 7,000MHz gives it 43% more memory bandwidth. This means there’s more capacity for graphical data and that all of that data can move at a higher speed.
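For the curious, here’s roughly where that 43% comes from. Peak memory bandwidth is the effective memory clock multiplied by the number of bytes moved per transfer. The sketch below assumes a 256-bit memory bus on both cards, a figure that isn’t quoted in this review, and the function name is purely illustrative.

```python
def memory_bandwidth_gb_s(effective_clock_mhz, bus_width_bits):
    """Peak bandwidth in GB/s: transfers per second times bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_clock_mhz * 1e6 * bytes_per_transfer / 1e9


# Assumption: both cards use a 256-bit memory bus (not stated in the review).
BUS_WIDTH_BITS = 256

gtx_980_bw = memory_bandwidth_gb_s(7_000, BUS_WIDTH_BITS)
gtx_1080_bw = memory_bandwidth_gb_s(10_000, BUS_WIDTH_BITS)
uplift_percent = (gtx_1080_bw / gtx_980_bw - 1) * 100

print(f"GTX 980:  {gtx_980_bw:.0f}GB/s")
print(f"GTX 1080: {gtx_1080_bw:.0f}GB/s")
print(f"Uplift:   {uplift_percent:.0f}%")
```

Under that assumption the GTX 1080 result lines up with the 320GB/s listed in the key specifications. Note that the uplift comes entirely from the faster memory clock; the jump from 4GB to 8GB adds capacity, not bandwidth.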

We now come to the physical hardware of the GTX 1080 itself. The Founders Edition card on review here is, let’s face it, probably not the product you’ll end up buying, and by the time you read this review there will probably be several third-party alternative GTX 1080s from the likes of Asus, MSI and EVGA available for less cash.

Still, if you pick up a Founders Edition card, you won’t be disappointed by its design. One member of the Trusted team commented that it looked like a ‘Decepticon disguised as a graphics card’. The sharp metal edges and angular design are certainly attractive and give the GTX 1080 a real sense of occasion. If that’s your sort of thing, you’ll be glad to have it in your rig.

It’s quiet, too. The single fan might as well be silent – buried in your PC case, there will be plenty of other components noisier than your GTX 1080, although if you choose to overclock (more on that later), you’ll definitely hear it kicking up a fuss if you push too far. During normal use, though, even when running intensive VR titles for extended periods, I barely heard a peep out of it.

In terms of ports, the GTX 1080 is fairly future-proof. There’s a single DVI port, an HDMI port and three DisplayPort connectors. The latter are where the future-proofing lies: whatever the final specifications of DisplayPort 1.3 and 1.4 turn out to be, the GTX 1080 is ready, and will be able to push 4K content at 120Hz, 5K at 60Hz and 8K (yes, really) at 60Hz using two connectors.
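To see why 8K needs two cables while 4K at 120Hz squeezes down one, you can compare the raw pixel data rate of each mode against what a single DisplayPort link can carry. This is a back-of-the-envelope sketch, not a full timing calculation. It assumes 24 bits per pixel, ignores blanking intervals, and takes a DisplayPort 1.3/1.4 link to be four lanes at 8.1Gbit/s with 8b/10b encoding; none of those figures come from this review, and the function names are illustrative.

```python
import math

# Assumption: DisplayPort 1.3/1.4 link, four lanes at 8.1Gbit/s each,
# with 8b/10b encoding leaving 80% of the raw rate for video data.
LINK_PAYLOAD_GBIT_S = 4 * 8.1 * 8 / 10


def pixel_data_rate_gbit_s(width, height, refresh_hz, bits_per_pixel=24):
    """Uncompressed pixel data rate in Gbit/s, ignoring blanking intervals."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def connectors_needed(rate_gbit_s):
    """Smallest number of links whose combined payload covers the rate."""
    return math.ceil(rate_gbit_s / LINK_PAYLOAD_GBIT_S)


modes = {
    "4K at 120Hz": (3840, 2160, 120),
    "5K at 60Hz": (5120, 2880, 60),
    "8K at 60Hz": (7680, 4320, 60),
}

for name, (width, height, refresh_hz) in modes.items():
    rate = pixel_data_rate_gbit_s(width, height, refresh_hz)
    print(f"{name}: {rate:.1f}Gbit/s, connectors: {connectors_needed(rate)}")
```

Real display timings add overhead on top of these figures, and HDR pushes the bits per pixel higher, so treat the results as a lower bound on what each mode demands.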

The HDMI connector is version 2.0b, which can produce content in 4K at 60Hz.

The GTX 1080 will also support HDR gaming and video playback thanks to its ability to decode HEVC video.

On the software side, Nvidia has come on leaps and bounds when it comes to multi-monitor and VR support. Much of the new tech won’t make a difference to visuals or performance immediately, but once developers begin creating games that support Nvidia’s features, you’ll notice a difference.

For example, there’s a form of what’s called asynchronous compute, which is a technology GPU fans have been talking about for the last few years. It’s something AMD has used to good effect in its last few generations of GPUs.

Async compute lets a GPU work on graphics and compute tasks simultaneously. Since both need to be completed together, running them side by side means they finish sooner, which effectively increases performance. Nvidia’s version, called ‘pre-emption’, is slightly different: instead of allowing tasks to be done simultaneously, it lets the GPU choose, at a more granular level, which tasks to prioritise.

Nvidia says that we won’t really feel the difference with most current games, but we’ll start seeing a difference when the new DirectX 12 standard becomes more common.

There’s also a whole host of optimisations for VR, including Lens Matched Shading, which takes into account any given VR headset and doesn’t render pixels that won’t ever be seen by the user, saving computing power. There’s also Simultaneous Multi Projection, which allows the GPU to render a scene from 16 different viewpoints. This is useful for a fair few pieces of Nvidia tech, including Single Pass Stereo, which allows the GPU to render a scene in 3D just once, and then shift it slightly using one of the Simultaneous Multi Projection views it’s already rendered.

This technique also helps with multi-monitor setups where the outer monitors are at an angle. Instead of the on-screen image appearing warped (because games assume all three monitors are in a straight line), developers can enable Perspective Surround, so the outer monitors get a less warped-looking image.