Google Lens: Real-time search comes to your phone’s camera

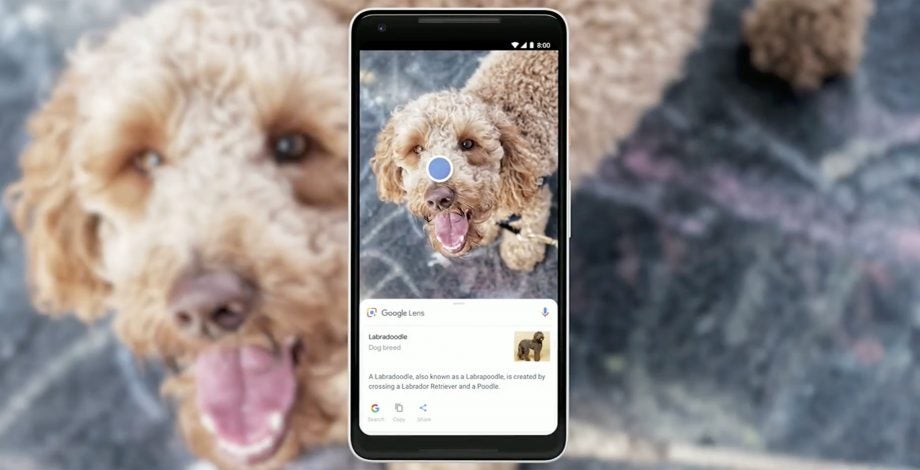

Google has announced an update for its Google Lens visual search tool, which lets users point their camera at objects to get information about them.

During the Google I/O 2018 keynote, the company announced the biggest update to Lens functionality since its initial rollout last year.

The key new feature is the real-time finder, which will look for searchable items automatically, without users having to tap the shutter.

So, for example, users will be able to hover over a book and see options to view search results, browse YouTube results and share what they find.

And Lens will soon work in real time. By proactively surfacing results and anchoring them to the things you see, you'll be able to browse the world around you, just by pointing your camera → https://t.co/A1nUSk8zsK #io18 pic.twitter.com/0xNI4dZez8

— Google (@Google) May 8, 2018

“We realised that you don’t always know exactly the thing you want to get the answer on,” Clay Bavor, Google’s vice president of virtual and augmented reality, told Wired.

“So instead of having Lens work where you have to take a photo to get an answer, we’re using Lens Real-Time, where you hold up your phone and Lens starts looking at the scene [then].”

There’s also a new feature called Style Match, which can be used when shopping for clothes and furniture. You’ll be able to point your phone at an item such as a lamp or a polo shirt, and find similar products you can buy.

In a few weeks, a new Google Lens feature called style match will help you look up visually similar furniture and clothing, so you can find a look you like. #io18 pic.twitter.com/mH4HFFZLwH

— Google (@Google) May 8, 2018

Lens is gaining the ability to let you lift text from real-world items too. Smart text selection will enable you to point your phone at a restaurant menu or Wi-Fi password card and copy and paste the text it recognises. If you don’t know what one of the dishes on a menu is, it will also bring up a picture to give you a helping hand.

The new beta for Google Lens has a new home. It’s now built directly into the native camera app, rather than sitting within Google Photos.

The new version is initially coming to more than 10 different Android devices. The Google Pixel phones, the new LG G7 ThinQ and the forthcoming OnePlus 6 are among those gaining access, as are devices from LGE, Motorola, Xiaomi, Sony Mobile, HMD/Nokia, Transsion, TCL, BQ and Asus.

On the LG G7 ThinQ, a dedicated Lens button will summon the feature when pressed twice. If the phone doesn’t have a dedicated button, the feature can be accessed via the main on-screen camera options.

How to get Google Lens

Back in March, Google Lens rolled out on iOS following its debut on all Android smartphones – with select handsets also set to receive the option to toggle the feature using Google Assistant in the future.

Announced during Google I/O 2017, Lens debuted on the Google Pixel range in October, although it didn’t enter the mainstream until November when Big G started to bake the tool into Google Assistant on both the Google Pixel and Google Pixel 2.

Google Lens was designed to surface relevant information using visual analysis. It can capture the details printed on a business card, for example, and even connect to a Wi-Fi network by scanning the credentials printed on a router’s casing.

Rolling out today, Android users can try Google Lens to do things like create a contact from a business card or get more info about a famous landmark. To start, make sure you have the latest version of the Google Photos app for Android: https://t.co/KCChxQG6Qm

Coming soon to iOS pic.twitter.com/FmX1ipvN62

— Google Photos (@googlephotos) March 5, 2018

The feature is being distributed in stages, according to Google. It arrived on most, if not all, Android smartphones on March 5, before starting its rollout for iOS – iPad, iPhone and iPod – the week commencing March 12.

Starting today and rolling out over the next week, those of you on iOS can try the preview of Google Lens to quickly take action from a photo or discover more about the world around you. Make sure you have the latest version (3.15) of the app. https://t.co/Ni6MwEh1bu pic.twitter.com/UyIkwAP3i9

— Google Photos (@googlephotos) March 15, 2018

To start using Google Lens, you’ll need to install the latest version of Google Photos on your smartphone. Open the App Store or Play Store, depending on your handset of choice, navigate into the Updates section, locate Google Photos, then tap Update.
