nVidia GeForce GTX 470 Fermi Review


nVidia finally releases its first DirectX 11 graphics cards.


Key Specifications

- Review Price: £319.99

It’s a tale as old as the industry that the fortunes of one tech company wax while those of another wane. Trends come and go, one technology supersedes another, one left-field, blue-sky idea takes off while another crash lands. It’s no surprise, then, that the two biggest graphics card manufacturers of the last decade have seen their fortunes rise and fall. Of late, it’s been ATI that’s had a good time of it thanks to its Radeon HD 5xx0 series of graphics cards. For just shy of six months they’ve been the clear choice thanks to class-leading performance, features, and power consumption and of course they’re the only DirectX 11 compatible cards on the market. Finally, however, ATI doesn’t have the DirectX 11 party all to itself as nVidia has launched the GTX 480 and GTX 470, two cards based on its latest chip technology codenamed Fermi.

On paper, both these cards look like they should be well on course to take performance to a new level. The GTX 480 has 480 stream processors, double the number in nVidia’s previous top-of-the-range card, the GTX 285, while the GTX 470 has 448. Coupled with an upgrade in memory from GDDR3 to GDDR5 and a myriad of architectural changes, these cards seem to have everything they need to catch up with or overtake ATI’s best.

Normally when a new range of graphics cards arrives we look at the flagship part first and take that opportunity to analyse the underlying architecture as well. However, due to nVidia having a limited number of review samples, we’ll actually be looking at the slower GTX 470 card in this review. We will still, however, take an in-depth look at the overall Fermi architecture that will be powering this card and nVidia’s entire range for the foreseeable future.

Fermi, then, is the overlying architecture that the chips used inside the GTX 480, GTX 470, and future nVidia cards will be built on. It shares some of the basic elements of the last few generations of nVidia designs but due to the demands of DirectX 11, quite a few elements have been rethought.

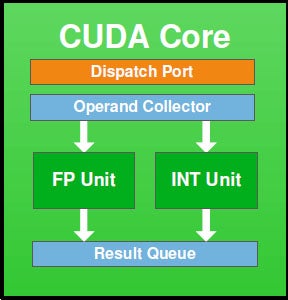

Starting with what is the same, the basic building block of Fermi is still the CUDA Core (Core) or Stream Processor as it used to be known. This little processor is the basic number-crunching unit that does the donkey work in terms of calculations for rendering all those pretty graphics in your games or churning through data for other GPU accelerated tasks like video encoding and ray tracing. However, moving up a level, while things still look vaguely similar they are fundamentally different.

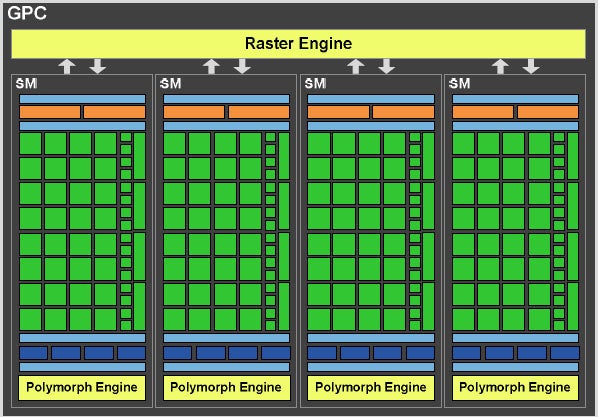

In G80 and GT200 – the chips used to power the 8800GTX and GTX 280, respectively – these Cores were clustered together into groups of eight in what is called a Streaming Multiprocessor (SM). Above this was the Texture/Processor Cluster (TPC), which added texture units and more memory to proceedings. With Fermi, though, an SM now includes 32 Cores and four texture units as well as something called the PolyMorph engine, which requires us to go back to basics to explain.

Contrary to what you might think, a graphics card doesn’t do everything when it comes to rendering a 3D scene on your computer. The CPU actually sets up the wireframe model onto which all the fancy effects you see are then plastered. However, because a CPU is doing so many other things – like AI, physics, animation – at the same time, these wireframes have to be kept quite simple to maintain a decent level of performance. This is why, despite all the advances in graphics we’ve seen in recent years, you still get games characters with pointy heads, and corrugated iron that turns out to be completely flat when you get up close – it’s just too computationally intensive to construct all the triangles required to accurately represent the complex surfaces of a realistic world.

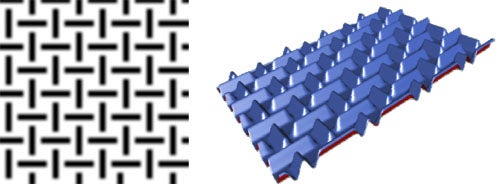

The solution (in part) is to pass over some of the work of creating the basic geometry of a scene to the GPU. This is done using two basic techniques called tessellation and displacement mapping, which make their debut for DirectX-based games with the new DirectX 11 API.


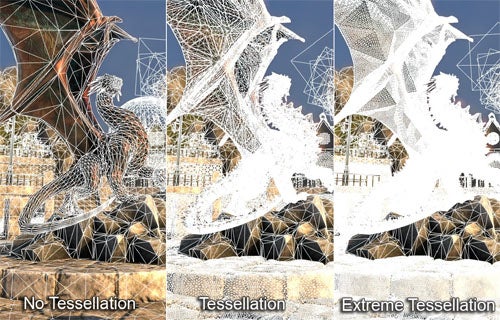

(centre)No Tessellation – Tessellated – Displacement Mapped(/centre)

Tessellation works by simply filling in the gaps between the vertices of a basic wireframe model, creating a much more realistic, smooth surface. It doesn’t add more detail but just gets the model to a stage where it has a more natural look.

Meanwhile, a displacement map is a texture (a 2D image) that encodes height information; when applied to a model, it shifts the positions of the model’s vertices. This adds all the little details that really bring the model to life. The result is far more realistic 3D models that need less graphical trickery elsewhere to look lifelike. Because displacement changes the core geometry, other graphical effects, like shadows, are greatly improved as well, as they follow the accurate lines of the complex model rather than the basic one, i.e. you don’t get pointy shadows.
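To make the two steps concrete, here is a minimal Python sketch that works on a 2D profile rather than a full 3D mesh. It is our own illustration, not how Fermi or DirectX 11 implements either stage: the function names are hypothetical, the tessellation is plain midpoint subdivision (real tessellators use smoothing schemes), and the height map is a simple 1D list sampled with linear interpolation.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def tessellate(points: List[Point], levels: int) -> List[Point]:
    """Insert a midpoint between every neighbouring pair, `levels` times."""
    for _ in range(levels):
        refined = [points[0]]
        for (x0, y0), (x1, y1) in zip(points, points[1:]):
            refined.append(((x0 + x1) / 2, (y0 + y1) / 2))
            refined.append((x1, y1))
        points = refined
    return points


def sample_height(height_map: List[float], u: float) -> float:
    """Linearly interpolate a 1D height map (two or more samples) at u in [0, 1]."""
    pos = u * (len(height_map) - 1)
    i = min(int(pos), len(height_map) - 2)
    t = pos - i
    return height_map[i] * (1 - t) + height_map[i + 1] * t


def displace(points: List[Point], height_map: List[float], scale: float) -> List[Point]:
    """Push each vertex upwards by the height map value at its position."""
    x_min, x_max = points[0][0], points[-1][0]
    return [
        (x, y + scale * sample_height(height_map, (x - x_min) / (x_max - x_min)))
        for x, y in points
    ]


# A flat sheet described by just two vertices, as a CPU might supply it.
flat_sheet = [(0.0, 0.0), (4.0, 0.0)]
fine_sheet = tessellate(flat_sheet, levels=2)                    # now five vertices
corrugated = displace(fine_sheet, [0.0, 1.0, 0.0, 1.0, 0.0], scale=0.5)
```

The point of the split is that the coarse two-vertex sheet is all the CPU has to supply; the extra vertices and the ridges are generated afterwards, which is the work DirectX 11 hands to the GPU.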

The result of the introduction of all this geometry manipulation is that the traditional geometry interpretation section of a GPU has needed reimplementing (or at least nVidia thinks it has; ATI kept things simpler with its HD 5xx0 series), moving from a single monolithic geometry setup stage to multiple. This brings us back to the PolyMorph engine as this is the part that manages the geometry calculations for each SM.

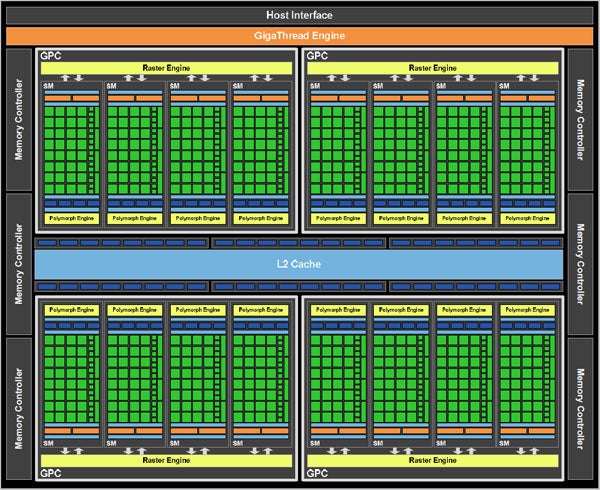

Similarly, Fermi does away with a single rasterisation engine (the part that manages the conversion of all those 3D triangles into 2D pixels) and instead has four, each of which is partnered with four SMs to form a Graphics Processing Cluster (GPC).

Finally, beyond this we get back to a more familiar layout again with the main thread scheduler presiding over the whole affair along with the Host Interface and memory controllers.

As well as these overarching changes, nVidia has also made a host of more subtle improvements. First up, the CUDA Core has improved handling of both single and double precision floating point calculations and meets the new IEEE 754-2008 standard in this regard. Texture units have also been overhauled including a speed bump and support for new texture compression formats. A new large L2 cache reduces the need to address system memory thus reducing latency as well.

(centre)”’Finally we get AA on foliage in Crysis”’(/centre)

The ROP units now support a new 32x Coverage Sampling Anti-Aliasing mode that provides even smoother and more realistic edge blending. There’s also a new super-sampling AA mode that for the first time allows foliage edge smoothing in Crysis. Overall ROP performance has also been improved through a number of compression and efficiency enhancements. Meanwhile, the addition of larger and better implemented caches improves bandwidth to the framebuffer, greatly enhancing ray tracing speed amongst other things.

A rather more esoteric addition is that of 3D Vision Surround. As with ATI’s Eyefinity, this is an attempt to give gamers a reason to invest in multiple high-end graphics cards, as conventional gaming arguably doesn’t require such expensive hardware. Essentially, it enables you to play games across three monitors, each with a resolution of up to 1,920 x 1,080 pixels. However, nVidia has gone a step further than ATI by adding stereoscopic 3D support as well. So, if you’re willing to buy two GTX 480s, three monitors capable of fast enough refresh rates for 3D, and a pair of 3D glasses, then the option is there for truly 3D gaming (in fairness, you can also game in 3D on a single monitor). We, however, didn’t have such a setup with which to test.

Fermi then is first being implemented in the GF100 chip which is powering the GTX 480 and GTX 470. It uses four GPCs making for a total of 512 Cores, 64 texture units, 16 PolyMorph engines, and four Raster engines. These are coupled with 48 ROPs split up into six groups of eight, with each group being serviced by a 64-bit memory controller.

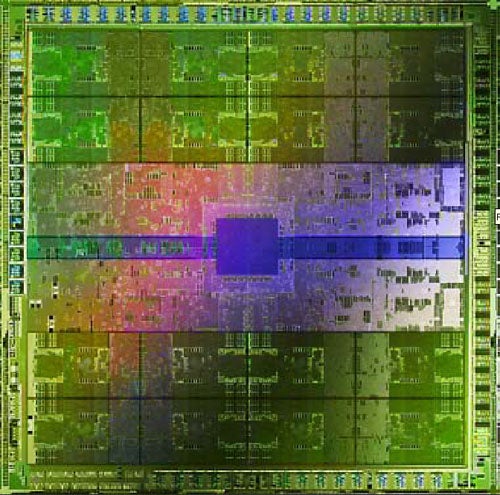

Now the thing to note here is that 512 Cores is more than I mentioned the GTX 480 having at the start of this article. This is because nVidia has disabled one of the SMs, and there can be only one conclusion as to why: nVidia simply can’t consistently make GF100s that work in their entirety. This is a common problem and is generally the reason why graphics cards like the GTX 470 exist. By disabling those parts of the chip that aren’t working, you can still get a working chip but it has lower performance. However, it’s the first time we’ve ever seen a company resort to disabling parts of a chip for a flagship product. Such is the risk of making a chip as large as GF100, containing as it does three billion transistors. The clock speeds of these cards are also quite low, so if nVidia can improve its manufacturing fortunes there could well be a faster clocked card using the whole, uninhibited GF100 chip in the near future.
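The unit counts quoted for GF100 all follow from a handful of per-block figures, and the arithmetic can be sketched in a few lines of Python. The constants come from the numbers given in this article; the function names are our own, and the assumption that a disabled SM also takes its four texture units with it is an inference from the SM layout described earlier rather than something nVidia has confirmed to us.

```python
# Per-unit figures quoted in the text for a full GF100 chip.
GPCS = 4
SMS_PER_GPC = 4
CORES_PER_SM = 32
TEXTURE_UNITS_PER_SM = 4
ROP_GROUPS = 6
ROPS_PER_GROUP = 8
MEMORY_CONTROLLER_BITS = 64

FULL_SMS = GPCS * SMS_PER_GPC  # 16 SMs, so also 16 PolyMorph engines


def shader_totals(disabled_sms: int = 0) -> dict:
    """Core and texture unit counts with some SMs switched off (assumed to scale together)."""
    active = FULL_SMS - disabled_sms
    return {
        "sms": active,
        "cores": active * CORES_PER_SM,
        "texture_units": active * TEXTURE_UNITS_PER_SM,
    }


def disabled_sms_for(cores: int) -> int:
    """How many SMs must be disabled to arrive at a given Core count."""
    return FULL_SMS - cores // CORES_PER_SM


full_rops = ROP_GROUPS * ROPS_PER_GROUP               # 48 ROPs
full_bus_bits = ROP_GROUPS * MEMORY_CONTROLLER_BITS   # combined memory interface width

print(shader_totals())           # the full chip
print(disabled_sms_for(480))     # GTX 480
print(disabled_sms_for(448))     # GTX 470
```

By this reckoning the GTX 480’s 480 Cores correspond to one disabled SM and the GTX 470’s 448 Cores to two.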

(centre)”’nVidia GeForce GTX 480”’(/centre)

For now, though, we have two cards available to pre-order (stock will be shipping come April 14th). Prices start at £448.99 for a GTX 480 while GTX 470s demand £319.99. At these prices, the GTX 480 is some 40 per cent more expensive than the HD 5870 and is only £50 short of the dual-chip HD 5970. As for the GTX 470, it’s the same price as an HD 5870 and £60 more than an HD 5850. At these sorts of prices, these cards are going to need some seriously impressive performance figures to come close to a recommendation.
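For anyone wanting to check the price comparison, a short Python sketch follows. The two nVidia prices are the ones quoted above; the ATI prices are derived from the stated differences rather than taken from a retailer, so treat them as approximations, and the helper name is our own.

```python
PRICES_GBP = {
    "GTX 480": 448.99,
    "GTX 470": 319.99,
}
# Derived from the text, not quoted directly: the HD 5870 is "the same price"
# as the GTX 470, and the HD 5850 is roughly £60 cheaper.
PRICES_GBP["HD 5870"] = PRICES_GBP["GTX 470"]
PRICES_GBP["HD 5850"] = PRICES_GBP["GTX 470"] - 60


def premium_percent(card: str, rival: str) -> float:
    """How much more `card` costs than `rival`, as a percentage of the rival's price."""
    return (PRICES_GBP[card] / PRICES_GBP[rival] - 1) * 100


print(f"GTX 480 vs HD 5870: {premium_percent('GTX 480', 'HD 5870'):.0f}% more")
print(f"GTX 470 vs HD 5850: {premium_percent('GTX 470', 'HD 5850'):.0f}% more")
```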

(centre)”’nVidia GeForce GTX 470”’(/centre)

As mentioned earlier, we’ll be looking at the flagship GTX 480 in due course but for now, let’s take a closer look at the GTX 470. Considering the build-up to this launch and the knowledge of how technically advanced its GPU is, the GTX 470 is rather unassuming in the flesh (though, in fairness, the GTX 480 is more of a spectacle). At 9.5in long, it’s the same length as a motherboard is wide, so it should have no problems fitting in most ATX-size PC cases. It’s also relatively light and has a fairly conventional looking cooler, so again it should be easy to accommodate.

Thanks to the chip’s 40nm manufacturing process, it has relatively low power consumption for its complexity and requires ”only” two six-pin PCI-E power plugs, so most modern power supplies should have no problem with it. That said, with a total board power of 215W, this card will suck up more juice than even an HD 5870.

ATI’s current crop of high-end cards have four display outputs (two Dual-link DVI-I, one HDMI and one DisplayPort), which means one of them has to encroach on the second card slot, which is normally used to exhaust hot air from the card. However, nVidia has stuck to three display outputs (two Dual-link DVI-I, and one mini HDMI) meaning the full expanse of the second slot is set aside for exhausting hot air.

With the arrival of competing DirectX 11 hardware from both ATI and nVidia, it’s finally time to start comparing DirectX 11 gaming performance. However, we started our testing with our usual selection of DX9 and DX10 titles. We tested this card in the usual way: we added it to our reference system, the details of which are below, then ran a series of gaming benchmarks. With the exception of Counter-Strike: Source (CSS) and Crysis, the results are recorded manually using Fraps while we repeatedly play the same section of the game. For CSS and Crysis we play back time-demos and the framerate is recorded automatically. All tests are repeated to check for consistency and the average of the results is recorded. For Crysis, all in-game detail settings are set to High while all the other games are run at their highest possible graphical settings.
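The repeat-and-average step can be sketched in Python as follows. This is only an illustration of the idea: the function name, the five per cent tolerance, and the sample framerates are all hypothetical and are not figures from our testing.

```python
from statistics import mean
from typing import List, Tuple


def summarise_runs(fps_runs: List[float], tolerance: float = 0.05) -> Tuple[float, bool]:
    """Average repeated runs and flag whether they agree closely enough.

    `tolerance` is the largest acceptable gap between the fastest and slowest
    run, expressed as a fraction of the average (a hypothetical threshold).
    """
    average = mean(fps_runs)
    spread = (max(fps_runs) - min(fps_runs)) / average
    return average, spread <= tolerance


# Made-up framerates for three runs of the same section of a game.
average_fps, consistent = summarise_runs([60.0, 62.0, 61.0])
if not consistent:
    print("Runs disagree too much; repeat the test")
else:
    print(f"Recorded result: {average_fps:.1f}fps")
```

Checking the spread before averaging matters most for the manual Fraps runs, where no two play-throughs are exactly alike.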

Considering we’re looking at just the GTX 470 today, we’ve kept our DX11 testing to just two cards, the GTX 470 and HD 5850, as these are supposed to be competing at the same price (even though they’re not currently). We ran three games: Just Cause 2, Colin McRae: DiRT 2, and Battlefield: Bad Company 2. All had in-game graphics settings turned up to maximum and we recorded framerates using Fraps during manual run-throughs.

”’Test System – DX9 and DX10 Games”’

- Intel Core i7 965 Extreme Edition

- Asus P6T motherboard

- 3 x 1GB Qimonda IMSH1GU03A1F1C-10F PC3-8500 DDR3 RAM

- 150GB Western Digital Raptor

- Microsoft Windows Vista Home Premium 64-bit

”’Cards Tested”’

- nVidia GeForce GTX 470

- nVidia GeForce GTX 295

- nVidia GeForce GTX 285

- AMD ATI HD 5970

- AMD ATI HD 5870

- AMD ATI HD 5850

”’Games Tested”’

- Far Cry 2

- Crysis

- Race Driver: GRID

- Call of Duty 4

”’Test System – DX11 Games”’

- Intel Core i7 965 Extreme Edition

- Asus P6T motherboard

- 3 x 2GB Kingston KHX1333C9D3K2/4G PC3-8500 DDR3 RAM

- 2TB Seagate Barracuda XT

- Microsoft Windows 7 Home Premium 64-bit

”’Cards Tested”’

- nVidia GeForce GTX 470

- AMD ATI HD 5850

”’Games Tested”’

- Just Cause 2

- Colin McRae: DiRT 2

- Battlefield: Bad Company 2


Looking first at DX9 and DX10 performance, the GTX 470 certainly keeps comfortably ahead of its predecessor, the GTX 285. However, apart from Far Cry 2, it delivers about the same performance as an HD 5850 and is markedly behind the HD 5870. At current prices this simply isn’t good enough. Yes, in Far Cry 2 it pulls out a healthy lead over both ATI’s cards but one game in four isn’t enough to our minds.

As for DX11 gaming, we see a fairly even split with the GTX 470 holding a consistent lead in Colin McRae: DiRT 2, both cards delivering about the same performance in Battlefield: Bad Company 2, and the HD 5850 holding a healthy lead in Just Cause 2. However, when we bring in value again, the HD 5850 or indeed the HD 5870 would be the better choice.

Looking at power draw, the picture doesn’t get any rosier for the GTX 470. At idle it’s 10W more power hungry than an HD 5870 and under load it sucks up 66W more. While it may not add hundreds of pounds to your energy bill, it’s certainly not ideal.
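To put the load figure in context, here is a rough Python estimate of what the extra 66W might cost over a year. Only the 66W difference comes from our measurements; the three hours of gaming a day and the 12p per kWh electricity price are assumptions picked purely for illustration, as is the function name.

```python
def extra_annual_cost_gbp(extra_watts: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Yearly cost of drawing `extra_watts` more for `hours_per_day` hours every day."""
    extra_kwh = extra_watts / 1000 * hours_per_day * 365
    return extra_kwh * price_per_kwh


# 66W is the measured load difference versus the HD 5870; the other two
# inputs are assumed values, not measurements.
cost = extra_annual_cost_gbp(extra_watts=66, hours_per_day=3, price_per_kwh=0.12)
print(f"Extra cost per year: £{cost:.2f}")
```

With those assumed inputs the difference comes to under £10 a year, so the practical concern is more likely to be heat and noise than the bill.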

It’s a similar story when we look at noise levels, as the GTX 470 is noisier than both the HD 5870 and HD 5850 both at idle and under load. However, it’s worth noting that none of these cards is exactly silent when idling and the GTX 470 isn’t significantly more distracting when gaming than the rest – all these cards make a noticeable whooshing sound.

One thing nVidia does have going for it is its exclusive technology, particularly PhysX and 3D gaming. The former still crops up as an added extra in some games and it really adds to the spectacle when it does. As for 3D gaming, we still think it’s a bit of a gimmick but with nVidia claiming compatibility with over 400 games already, it could well be set to take off. All told though, neither of these features feels to us like enough to justify the extra cost of the GTX 4x0 cards.

”’Verdict”’

We’ve waited a long time for nVidia’s true next-generation graphics hardware to arrive and on a technical level it looks to have been worth the wait. The Fermi architecture packs in oodles of features and certainly has the potential to push forward gaming and non-gaming application performance. However, in the cold light of day, the architecture’s promise is all theoretical and so far the final product just doesn’t deliver. The GTX 470 is simply overpriced and underperforming.

Trusted Score

Score in detail

- Value 6

- Features 8

- Performance 8