nVidia GeForce 8800 GT Review

Smaller, faster, and cheaper than the competition. Is this the best DirectX 10 card yet?

Key Specifications

- Review Price: £175.00

The arrival of the nVidia GeForce 8800 GTX was close to a monumental event in the history of 3D graphics. It was the first card to support DirectX 10 and its associated unified shader model, it greatly improved image quality compared to nVidia’s previous generation of cards (finally catching up with ATI in this regard), and it blew every other card on the planet out of the water in terms of performance. Unfortunately, all this power also demanded an enormous price tag and, considering expected competition from ATI and the imminent release of cheaper mid-range cards based on the same technology, the GTX was deemed a card for the bleeding-edge enthusiast only.

To remedy this nVidia launched the 8800 GTS 640MB the following month and the 8800 GTS 320MB a couple of months later still. They both offered close to the awesome performance of the GTX but at a much more reasonable price. However, at around £250-£300, they were still a bit too expensive for the gamer on a budget – these were lower high-end cards, not true mid-range parts. Of course, had we the benefit of hindsight we would have insisted that a GTS was worth saving every penny for because what followed throughout the rest of 2007 was one disappointment after another.

First came nVidia’s supposed mid-range parts, the 8600 GTS and 8600 GT, which were massively cut down versions of the 8800 series. They were smaller and quieter than the 8800 series, and had great new HD video processing capabilities but their gaming performance was way below expectations and try as we might, we just couldn’t see fit to ever recommend them, even though they were quite cheap. We then had ATI’s Radeon HD 2900 XT, which was level with the 8800 GTS 640MB in terms of performance but consumed a huge amount of power when under load and was still too expensive to call mid-range. Finally we had ATI’s attempt at true mid-range DX10 cards, in the shape of the HD 2600 XT and HD 2600 Pro, which were even better in terms of multimedia capabilities than nVidia’s 8600 series, but again lacked the horsepower to make for a worthwhile upgrade for any gamer with a previous generation card like the X1950 Pro or 7900 GS.

All of which brings us to now, a full year after the 8800 GTX first arrived, and the launch of the 8800 GT, the first true “refresh” of any of the DirectX 10 capable graphics cards. It has been a long time in the making but with specs that rival the 8800 GTS and high street prices hovering around the £175-£200 mark, this could finally be the mid-range card we’ve all been waiting for. But before I evangelise the card too much, I should probably explain a bit more about what makes it so special.

As technology marches on and the transistor count of CPUs and GPUs alike continues to rise, there is a natural push for ever smaller transistors. The reduction in size brings with it lower power consumption, which in turn means the chips don’t run as hot, and, because the chips themselves are smaller, more of them can fit onto each silicon wafer, reducing the relative manufacturing cost of each chip and theoretically giving us lower high street prices for our hardware. However, changing manufacturing processes can be a high-risk business, which is why convention has it that manufacturers will release brand new architectures on existing tried and tested processes, as was the case with the 8800 GTX and HD 2900 XT. As the architecture then matures, with the introduction of lower powered mid-range parts and later refresh parts, the newer manufacturing process can be introduced.

It’s this path that the 8800 line has followed, with the G80 core at the heart of the 8800 GTX and GTS being made using a 90nm process and the 8800 GT being powered by the G92 core that’s made using the newer 65nm process. Now the change may not seem like much but, with ideal scaling, it equates to a reduction of roughly 28 per cent in each dimension and close to half the area for any given chip design, which conversely leaves room to manufacture nearly twice as many chips on each wafer. The upshot of which is smaller, cheaper, less power hungry chips, and that can only be a good thing. However, the G92 isn’t just a die shrink; there’s a little more to it than that.
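As a rough cross-check on the shrink arithmetic, here is a minimal sketch of ideal process scaling. It assumes every feature shrinks in proportion to the node name, which real designs rarely manage, and the function name `ideal_shrink` is purely illustrative.

```python
# Ideal (textbook) scaling of a chip design between two process nodes.
# Assumption: every feature shrinks in proportion to the node name, which
# real designs such as G92 will not achieve exactly.

def ideal_shrink(old_nm: float, new_nm: float) -> dict:
    linear = new_nm / old_nm   # ratio of edge lengths
    area = linear ** 2         # ratio of die areas
    return {
        "linear_reduction_pct": (1 - linear) * 100,
        "area_reduction_pct": (1 - area) * 100,
        "extra_dies_per_wafer_pct": (1 / area - 1) * 100,
    }

result = ideal_shrink(90, 65)
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

The figures ignore wafer edge losses and yield, so they are best read as an upper bound on what a shrink alone can deliver.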

For a start, the video processing engine, dubbed VP2, that was featured on the 8600 range has now found its way into the 8800 GT, so you can enjoy high-definition video without your system grinding to a halt. The final display engine that was controlled by a separate chip on the 8800 GTX is also now incorporated into G92. The result is a chip that actually has 73M more transistors than the one in the 8800 GTX (754M vs. 681M) yet still has fewer stream processors, less texture processing power, and fewer ROPs than its more powerful brother.

A new version of nVidia’s transparency multisampling anti-aliasing algorithm has also been added to the 8800 GT’s arsenal, which should greatly improve image quality using this mode, while also maintaining great performance. Apart from this, though, there is little in the way of new graphics features with the new processor.

nVidia has evidently thought long and hard about exactly which areas of the previous 8800 cards were being under-utilised and could be scaled back, and which were more important to maintain. The result is a GPU design that sits somewhere between the 8800 GTX and 8800 GTS in terms of performance but with the features of the 8600 GTS thrown in. To all intents and purposes, this makes the 8800 GTS completely redundant, and the Radeon HD 2900 XT isn’t in that great a position either (though we’ll just have to wait and see whether ATI’s upcoming 3850 and 3870 can change that situation). Meanwhile, the 8800 GTX and Ultra should still offer more performance but have fewer features, cost significantly more, and are more power hungry. The 8800 GT really is building a strong case for itself.

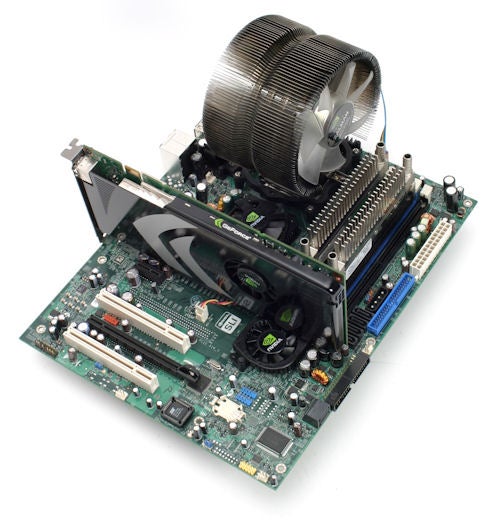

If it weren’t something even my colleagues would frown upon, I’d be inclined to say that the 8800 GT is a sexy card. The new cooler design is just very pleasing on the eye and, much as with the internal refinements of the G92, it just emanates the feeling of a matured design. It certainly looked the part on our nVidia branded test bed, anyway.

More important than the aesthetic aspects of the design, though, is the simple fact that nVidia has managed to squeeze all this power into a single slot card, which is not only a welcome change but really quite surprising. In fact, I dare say that having learned something of the specs of the 8800 GT, most people would’ve bet significant money on it requiring a dual slot cooler.

The reason why nVidia can get away with using such a slim design is the change in manufacturing process, which reduces the thermal envelope of the card to a level that a single slot cooler can cope with. In fact, it’s so thermally accomplished that the relatively small fan doesn’t even need to spin very fast to keep things cool, resulting in a card that remains near silent even during intense gaming. It does get quite hot to the touch and will require a decent amount of airflow within a case to prevent overheating, but in our open air test bed it didn’t become hot enough to require the fan to spin excessively.

Also as a result of the smaller manufacturing process, the 8800 GT consumes only 105W, even under full load. Therefore, only a single six-pin power connector is required to get the beast going at full pelt. This is another nice change and it’s a development we hope will turn into a trend, not just a one-off.
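The connector count follows from simple power budgeting, sketched below. The 75W limits for a PCI Express x16 graphics slot and for each six-pin connector come from the PCI Express specifications rather than from this review, and the helper name is hypothetical.

```python
import math

SLOT_WATTS = 75     # power available from a PCI Express x16 graphics slot
SIX_PIN_WATTS = 75  # power available from each six-pin auxiliary connector

def six_pin_connectors_needed(board_watts: float) -> int:
    """Number of six-pin connectors needed on top of the slot's own supply."""
    extra = max(0.0, board_watts - SLOT_WATTS)
    return math.ceil(extra / SIX_PIN_WATTS)

connectors = six_pin_connectors_needed(105)  # the 8800 GT's quoted full-load draw
headroom = SLOT_WATTS + connectors * SIX_PIN_WATTS - 105
print(f"{connectors} six-pin connector(s), {headroom}W of headroom")
```

A card that stayed within the slot's 75W would need no auxiliary connector at all, while anything above 150W would need a second one.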

Output options are pretty standard fare, with two dual-link HDCP enabled DVI-I sockets allowing for both analogue and digital connections to PC monitors and HDTVs, while a seven-pin analogue video port provides the usual composite and component options. The DVI connections can be used in conjunction with DVI-to-VGA and DVI-to-HDMI dongles so every connection option (bar the upcoming DisplayPort) is supported. However, nVidia is still choosing to make audio pass-through (for use with HDMI connections) an option for third-party vendors to implement rather than make it a requirement as ATI has. That’s a shame, considering this slim, cool, and quiet card could be perfect for a gaming-biased HTPC.

The 8800 GT is actually the first graphics card ever to be PCI Express 2.0 compliant, which means a x16 slot can move data between the card and the rest of the system at up to 8GB/sec in each direction, or 16GB/sec combined – twice the previous standard. Though this may be of use in workstation applications and GPGPU computing, it is largely going to go unnoticed by the average gamer, at least for now. Either way, the standard is compatible in both directions with previous versions of PCI Express, so it isn’t something to worry about.
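The PCI Express figures can be derived from the per-lane signalling rates, as this short sketch shows. The rates (2.5GT/sec for the first generation, 5GT/sec for 2.0) and the 8b/10b encoding overhead are taken from the PCI Express specifications, and the result is a theoretical peak rather than anything measured on this card.

```python
# Theoretical PCI Express bandwidth per direction, from the per-lane
# signalling rate and 8b/10b line encoding (8 data bits per 10 bits sent).

GIGATRANSFERS_PER_SEC = {"1.x": 2.5, "2.0": 5.0}
ENCODING_EFFICIENCY = 8 / 10

def bandwidth_gb_per_sec(version: str, lanes: int = 16) -> float:
    """Peak one-way bandwidth in GB/sec for a link of the given width."""
    gbits = GIGATRANSFERS_PER_SEC[version] * ENCODING_EFFICIENCY * lanes
    return gbits / 8  # bits to bytes

for version in GIGATRANSFERS_PER_SEC:
    one_way = bandwidth_gb_per_sec(version)
    print(f"PCIe {version} x16: {one_way:.0f}GB/sec each way, "
          f"{2 * one_way:.0f}GB/sec combined")
```

Real-world transfers fall short of these peaks because of protocol overhead, which is one more reason the doubling is unlikely to show up in games.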

All the usual board partners will have stock available over the coming weeks. Most seem to be offering standard clocked and overclocked versions and there are also some bundled game offers around to sweeten the deal. We’ll be sure to get some of these cards in for testing over the coming weeks. For now, though, let’s take a look at how the reference board performs.

With the launch of some truly stellar gaming titles in the last few months, some of our tried and tested graphics benchmarks are looking a bit long in the tooth. So, for this review I’ve dropped Counter-Strike: Source in favour of Team Fortress 2, and I’ve added in the Crysis demo to see how these cards cope with the latest and most demanding of games. Call Of Duty 2, 3DMark06, and Prey are still in there for the time being but this will probably be the last time they will be used for testing – we just need to iron out the kinks in our test code before the new benchmarks can be let loose.

As per usual, each game is run with full in-game detail settings at a variety of resolutions with varying degrees of anti-aliasing and anisotropic filtering applied. For Call Of Duty 2, Prey, 3DMark06, and Team Fortress 2, each setting is run three times and the average is taken so we end up with a pretty rock solid figure. For Crysis we’ve used the inbuilt timedemo that loops four times, enabling us to calculate an average from the results. As it’s such a demanding game, though, we’ve kept in-game settings to high (rather than very high) and also stuck to resolutions of just 1,280 x 1,024 and 1,680 x 1,050. We also use transparency anti-aliasing throughout our testing as we feel it’s a processing technique that greatly enhances image quality in a lot of games.
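The averaging step described above could be sketched as follows. This is an illustration rather than our actual test code: the function name is made up, the frame rates are hypothetical, and the run-to-run spread is an extra sanity check that the review does not report.

```python
from statistics import mean, stdev

def summarise_runs(fps_runs):
    """Return the mean frame rate and the run-to-run spread as a percentage."""
    average = mean(fps_runs)
    spread_pct = stdev(fps_runs) / average * 100 if len(fps_runs) > 1 else 0.0
    return average, spread_pct

# Hypothetical numbers for illustration only, not results from this review.
example_runs = [61.2, 60.4, 61.0]
average, spread_pct = summarise_runs(example_runs)
print(f"average {average:.1f}fps, spread {spread_pct:.1f}%")
```

A spread of more than a few per cent would suggest that one run was disturbed and the setting is worth repeating.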

Our test platform consists of the following:

We generally find that any single card configuration struggles to cope at the resolutions demanded by a 30in monitor so we’ve stuck to testing at 1,920 x 1,200, 1,600 x 1,200 (or 1,680 x 1,050), and 1,280 x 1,024 for these tests. We will, however, come back to test SLI and CrossFire configurations very soon so we will take a look at performance at 2,560 x 1,600 then.
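To put those resolutions in perspective, a quick pixel count shows how much more work each frame involves. This is a minimal sketch that assumes rendering cost scales roughly with the number of pixels, which ignores memory bandwidth and anti-aliasing effects.

```python
# Pixels per frame at each tested resolution, relative to 1,280 x 1,024.
RESOLUTIONS = [(1280, 1024), (1680, 1050), (1600, 1200), (1920, 1200), (2560, 1600)]

def pixel_count(width: int, height: int) -> int:
    return width * height

baseline = pixel_count(*RESOLUTIONS[0])
for width, height in RESOLUTIONS:
    pixels = pixel_count(width, height)
    print(f"{width} x {height}: {pixels / 1e6:.2f} megapixels, "
          f"{pixels / baseline:.2f}x the baseline")
```

On this measure a 30in panel asks for well over one and a half times the pixels of 1,920 x 1,200, which fits with single cards struggling there.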

As was to be expected, the 8800 GTX is still top of the pile (well, except for the 8800 Ultra, but we haven’t tested that here) but overall the 8800 GT makes a good show of itself, most notably staying ahead of the 8800 GTS for the majority of tests. At the highest resolutions and anti-aliasing settings, the reduced memory bandwidth of the 8800 GT lets it down and the 8800 GTS just creeps ahead on the odd occasion. However, considering the price difference, and all the other benefits of the new card, the 8800 GT would be the better bet every time.

Conversely, the 8800 GTX does enough to show why it still demands such a high price. While the other cards begin to drop off significantly as the resolution, anti-aliasing, and anisotropic filtering are cranked up, the 8800 GTX takes far less of a hit. In particular, Team Fortress 2 at 1,920 x 1,200 with 8xAA and 16xAF is nearly twice as fast with the 8800 GTX as it is with the 8800 GT. However, for the most part the 8800 GT is more than capable.

Of course, none of this takes into account the incredibly poor framerates that all the cards produce when playing Crysis. Hopefully the full game will be slightly more optimised, while future driver updates from both ATI and nVidia should also improve the situation. Otherwise, Crysis could prove to be unplayable for most people, which would be a huge shame because it looks incredible.

Verdict

While the nVidia 8800 GT doesn’t beat the established 8800 GTX, it provides close to the same performance for a fraction of the price and also packs in more features to sweeten the deal. Add to this its small size, cool operation, and quiet running and you have a card that we can’t recommend highly enough. It’s simply phenomenal.

We had some anomalous results when 4x anti-aliasing was enabled on the 8800 series cards in Prey and Call Of Duty 2. This was down to the cards occasionally not displaying textures correctly, which actually improves performance because there’s less post-processing to be done. It’s a known issue and one we’ve yet to solve but I’ve included the results anyway, as you may also find you have similar issues.

Trusted Score

Score in detail

- Value: 10
- Features: 10
- Performance: 9