New Adobe Photoshop feature could save the world from deepfakes

Photoshop has been coming to the rescue of photographers and assisting pranksters for a generation. Now Adobe is hoping the tool can protect us all from faked images that are a little too real for comfort.

The company is working with high-profile partners like Twitter and the New York Times on a Content Authenticity Initiative (CAI) that could add new layers of metadata to an image to certify it’s the real deal, Wired reports.

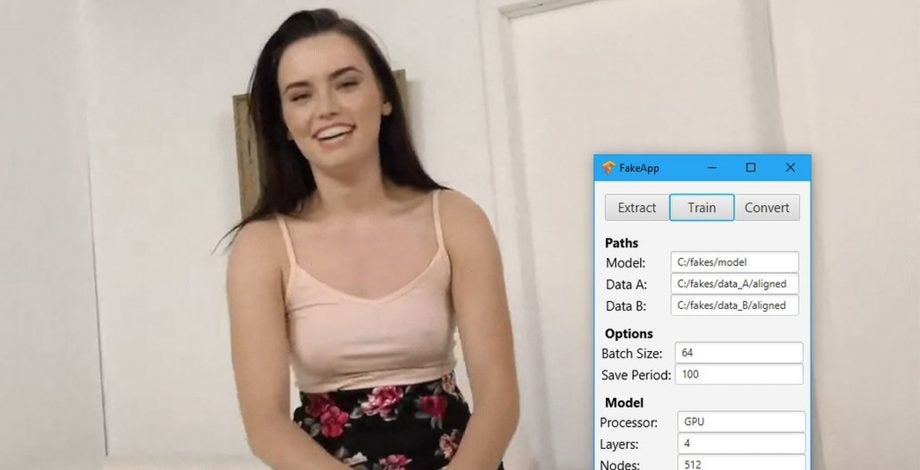

The imaging software giant is looking to combat deepfake videos and other image manipulation tools, which can be used to make it appear that someone has said or done something they have not. The practice has been deemed an existential threat because world leaders and other important figures can fall victim to the fakery.


The new tool would effectively be like a car service record for the image. It would include the camera used, the photographer, a record of the edits and the publishing history. The system could let social media users perform a sort of fact check on the image. New tags could be added as time goes on.
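To make the "service record" idea concrete, here is a minimal sketch in Python of what such a record might hold. It is purely illustrative: the class name, field names and sample values are all assumptions made for this example, and it does not represent the actual CAI format or anything Adobe has published.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ProvenanceRecord:
    """Hypothetical provenance record for one image (NOT the real CAI format)."""
    camera: str
    photographer: str
    edits: List[str] = field(default_factory=list)          # record of edits, in order
    publications: List[str] = field(default_factory=list)   # publishing history, in order
    tags: Dict[str, str] = field(default_factory=dict)      # extra tags added over time

    def log_edit(self, description: str) -> None:
        """Append one edit to the image's history."""
        self.edits.append(description)

    def log_publication(self, outlet: str) -> None:
        """Append one outlet to the publishing history."""
        self.publications.append(outlet)

    def summary(self) -> str:
        """One-line, human-readable 'fact check' view of the record."""
        outlets = ", ".join(self.publications) or "nobody yet"
        return (f"Shot on {self.camera} by {self.photographer}; "
                f"{len(self.edits)} edit(s); published by: {outlets}")


# Sample values below are invented for illustration.
record = ProvenanceRecord(camera="Example Camera X", photographer="Jane Doe")
record.log_edit("cropped")
record.log_edit("colour corrected")
record.log_publication("Example News")
record.tags["licence"] = "editorial use"
print(record.summary())
```

A real system would also need some way to stop the record itself being tampered with, which this sketch deliberately leaves out.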

Adobe says the technology is coming to a preview version of Photoshop before the end of the year. The company hopes it will help to stem the flow of misinformation online.

“We imagine a future where if something in the news arrives without CAI data attached to it, you might look at it with extra skepticism and not want to trust that piece of media,” says project lead Andy Parsons.

The report points out that while major news organisations will probably welcome the system, it will have less of an impact on user-generated viral content that isn’t based on a certified image.

Marc Lavallee, the head of R&D for the New York Times, added: “People are getting more comfortable seeing those signals. If we can pick off people who are well-intentioned and about to unwittingly share misinformation, that’s a great starting point.”