Samsung CHG70 Review - Samsung CHG70 – HDR Review

Samsung CHG70 – HDR


Sections

- Page 1 Samsung CHG70 Review

- Page 2 Samsung CHG70 – HDR Review

- Page 3 Samsung CHG70 – Image Quality, Gaming and Verdict Review

Samsung CHG70 – HDR explained

Although there’s plenty more to this display, its big standout feature is the inclusion of HDR. This technology has been around in TVs for a while, but it’s taken some time to arrive in monitors due to the lack of support in Windows and the general messiness that is PC hardware.

For a full explanation of HDR, read our HDR TV guide; in a nutshell, it’s about making the image on a display look closer to real life. That means more colours, greater contrast, darker blacks and higher brightness.

For instance, take an image of a bright moon in a dark sky. With HDR, the moon will shine brightly and be full of detail, while the sky will be inky black. With standard dynamic range (SDR), the sky may appear grey and the moon less bright, and both will show less detail.

The most common HDR standard is HDR10, which allows images to be mastered for displays with a peak brightness of up to 1,000 nits or, in some cases, 4,000 nits; the even more demanding Dolby Vision standard aims for 10,000 nits. By comparison, standard Blu-rays are mastered for 100 nits, and most laptop screens top out at around 300 nits. That gives some indication of just how much wider the range is with HDR.
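To put those figures in proportion, the short Python sketch below divides each peak brightness quoted above by the 100-nit level standard Blu-rays are mastered for. The labels and the `headroom` helper are just names chosen for this illustration, and the sum only compares peak brightness; it says nothing about how a particular display handles content brighter than it can actually reach.

```python
# Peak brightness figures quoted in the text, in nits (cd/m²).
SDR_REFERENCE_NITS = 100  # standard Blu-ray mastering level

peaks = {
    "Typical laptop screen": 300,
    "HDR10 (common mastering)": 1000,
    "HDR10 (some content)": 4000,
    "Dolby Vision (target)": 10000,
}

def headroom(peak_nits: float, reference_nits: float = SDR_REFERENCE_NITS) -> float:
    """How many times brighter a peak is than the SDR reference level."""
    return peak_nits / reference_nits

for label, nits in peaks.items():
    print(f"{label}: {nits} nits = {headroom(nits):.0f}x the SDR reference")
```

In other words, common HDR10 mastering already asks for ten times the peak of an SDR Blu-ray, and Dolby Vision’s target is a hundred times.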

Also crucial is the overall contrast ratio, which compares the brightest and darkest elements on-screen. The figure usually quoted for HDR10 is 20,000:1; however, this is where the whole HDR thing becomes rather confusing. You see, a display doesn’t actually need to deliver that contrast ratio in raw terms; it just needs to be able to process it.

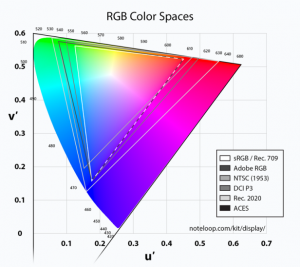

This is also true of colour. SDR content is based on the sRGB colour space (or Rec 709 for Blu-rays) with a bit depth of 8 bits per colour channel. The colour space sets the range of colours that can be shown, while the bit depth sets how many distinct steps there are between them. HDR10, meanwhile, specifies that a display must be able to handle the larger DCI-P3 colour space (within the Rec 2020 container) and finer 10-bit colour. So not only can the display show a greater range of colours, but it can display finer gradations between them too.
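The jump from 8-bit to 10-bit is bigger than it sounds, because the extra two bits apply to each of the red, green and blue channels. The Python sketch below simply counts the steps; the function names are illustrative, and the count says nothing about which colours those steps cover, which is the colour space’s job.

```python
def levels_per_channel(bits: int) -> int:
    """Distinct shades each of red, green and blue can take at a given bit depth."""
    return 2 ** bits

def total_colours(bits: int) -> int:
    """Distinct RGB combinations: one level per channel, three channels."""
    return levels_per_channel(bits) ** 3

for bits in (8, 10):
    print(f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
          f"{total_colours(bits):,} colours")
```

That works out to 256 shades per channel (about 16.7 million colours) for 8-bit, against 1,024 shades per channel (about 1.07 billion colours) for 10-bit, which is why gradients such as a dusk sky can be rendered more smoothly.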

But again, the display doesn’t actually have to show all of this; it simply has to be able to process the information.

So, in theory, a TV or monitor with completely ordinary SDR imaging performance could still be called HDR, simply because it can process the HDR signal.

All of which brings us to the Samsung CHG70. Technically it delivers a true HDR10 experience, but like most HDR monitors and TVs, its performance meets only some of the figures mentioned above.

For a start, it can produce 10-bit colour with 95% DCI-P3 colour space coverage (equivalent to 125% sRGB), but its 600-nit maximum brightness is some way off the 1,000 nits specified. Nevertheless, that’s a good deal more than the 300-400 nits of a typical display. Incidentally, the full 600 nits is only available in HDR mode; in SDR mode the display delivers a more typical 350 nits.

As for contrast, it’s again technically well short of 20,000:1. However, with a native contrast ratio of 3000:1, it’s three times better than the typical 1000:1 ratio of IPS or TN LCD-based monitors. For an LCD, native contrast is the ratio between the brightest and darkest parts of an image while the backlight is held at a constant level.
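Because contrast is a ratio, it can be turned around to show what black level a given peak brightness implies: at 1,000 nits, 20,000:1 means a black of 0.05 nits. The Python sketch below applies the same sum to the CHG70’s 350-nit SDR and 600-nit HDR peaks, assuming (our assumption, not Samsung’s figure) that the 3000:1 native ratio holds at both backlight levels and ignoring local dimming altogether. The helper names are illustrative.

```python
NATIVE_CONTRAST = 3000  # CHG70 native ratio quoted in the text

def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Contrast ratio expressed as one number: brightest white / darkest black."""
    return white_nits / black_nits

def black_level(white_nits: float, ratio: float) -> float:
    """Black level (in nits) implied by a peak brightness and a contrast ratio."""
    return white_nits / ratio

# The commonly quoted HDR10 figure: 20,000:1 with a 1,000-nit peak.
print(f"20,000:1 at 1,000 nits -> black of {black_level(1000, 20_000):.3f} nits")

# CHG70, ASSUMING the 3000:1 native figure holds at both backlight levels
# and ignoring local dimming entirely.
for mode, peak in (("SDR", 350), ("HDR", 600)):
    black = black_level(peak, NATIVE_CONTRAST)
    print(f"CHG70 {mode}: {peak} nits peak -> black of {black:.3f} nits "
          f"({contrast_ratio(peak, black):,.0f}:1)")

# Native contrast compared with a typical 1000:1 IPS or TN panel.
print(f"{NATIVE_CONTRAST / 1000:.0f}x the native contrast of a 1000:1 panel")
```

Under those assumptions, the brighter HDR mode also lifts the black level, to roughly 0.2 nits, which is exactly the gap local dimming is meant to close.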

Samsung also employs a form of local dimming of the backlight, which is where different portions of the backlight shine at different brightness levels to increase the dynamic contrast of the display. However, the company hasn’t explained how this works, nor how many lighting zones there are or what the equivalent contrast ratio is, so it’s difficult to determine how close it gets to that 20,000:1 figure.
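Since Samsung hasn’t said how its local dimming works, the Python sketch below is not its algorithm. It’s a deliberately simplified, generic model of zone-based dimming, assuming a single row of pixels, equal-width zones, no light bleeding between zones and a hypothetical `MIN_BACKLIGHT` floor; only the 600-nit peak and the 3000:1 native ratio come from this review. It illustrates why dimming the backlight behind dark areas lets on-screen contrast exceed the panel’s native figure.

```python
from typing import List

PEAK_NITS = 600.0       # CHG70 HDR-mode peak, from the text
NATIVE_CONTRAST = 3000  # CHG70 native ratio, from the text
MIN_BACKLIGHT = 0.1     # HYPOTHETICAL: dimmest a zone may go (fraction of full)

def dim_zones(target_nits: List[float], zones: int) -> List[float]:
    """Simplified 1-D local dimming: split a row of pixels into equal zones,
    drive each zone's backlight only as bright as its brightest pixel needs,
    and return the luminance each pixel actually ends up showing."""
    if len(target_nits) % zones:
        raise ValueError("row length must divide evenly into zones")
    size = len(target_nits) // zones
    shown: List[float] = []
    for z in range(zones):
        zone = target_nits[z * size:(z + 1) * size]
        # Backlight level for this zone, clamped between the floor and full power.
        backlight = min(max(max(zone) / PEAK_NITS, MIN_BACKLIGHT), 1.0)
        zone_white = PEAK_NITS * backlight
        # The LCD layer can't block all light: its darkest state still lets
        # through 1/NATIVE_CONTRAST of whatever this zone's backlight emits.
        zone_black = zone_white / NATIVE_CONTRAST
        shown.extend(min(max(t, zone_black), zone_white) for t in zone)
    return shown

def on_screen_contrast(shown: List[float]) -> float:
    """Ratio of the brightest to the darkest pixel actually displayed."""
    return max(shown) / min(shown)

# A moon-in-a-dark-sky style row: four black pixels, four full-brightness pixels.
scene = [0.0] * 4 + [PEAK_NITS] * 4
for zones in (1, 2):
    ratio = on_screen_contrast(dim_zones(scene, zones))
    print(f"{zones} zone(s): about {ratio:,.0f}:1")
```

With a single zone the row can’t beat the native 3000:1, but once the dark half gets its own dimmed zone the measured ratio climbs well past it. The more zones a display has, the more closely the backlight can follow the image; real implementations also have to manage blooming, where light from a bright zone spills into a dark neighbour, which this model ignores.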

Making all this even more complicated is the fact that HDR support in Windows is currently a mess. The latest Windows 10 update now supports the feature, but turn it on and your desktop will look dull and drab, even on a display that supports HDR.

I won’t get into the reasons for this here, but the short version is that you should only turn on the feature if you’re specifically playing a game or watching a video that supports HDR. Otherwise, it should be left off.

Meanwhile, if you’re watching HDR content through a Blu-ray player or console, the display should simply recognise it and display it properly.

Samsung CHG70 – HDR performance

So how does the CHG70’s HDR look? Well, the native contrast ratio, high brightness and local dimming combine to great effect, providing an excellent representation of what HDR is all about.

The contrast of the display adds a depth and richness to its image that most monitors simply can’t match. Bright skies, headlights and any other bright objects shine brighter, and dark areas look darker.

[Comparison images: with HDR / without HDR]

The greater colour depth is clear to see too. View a bright or dark part of an image and all the subtleties are visible; on an SDR display, those areas tend to collapse into a single mass of the same colour.

It’s early days for HDR, and doubly so for HDR on PCs, but the CHG70 looks like it should remain competitive as more HDR monitors arrive. Its sheer contrast performance and colour accuracy are well ahead of typical monitors, and the only obvious ways to improve on it would be OLED technology or an LCD with Full Array Local Dimming (FALD), where instead of a single backlight you have dozens (or hundreds) of individually controlled backlight zones. Both these technologies can deliver even greater contrast, but both are still very expensive.