Leadtek WinFast PX8800 GTX TDH Review

It's the fastest graphics chip on the planet. Enter the nVidia GeForce 8800 GTX.

Key Specifications

- Review Price: £470.00

The winds of change are blowing and blowing hard. Windows Vista is due to launch on January 30th and brings with it a whole host of “features” that I’m sure will increase our productivity no end. Or perhaps not; we’ll have to wait and see. What is clear is that Vista will bring with it DirectX 10, which will not be ported to Windows XP.

That means, in order to run DirectX 10 games at their very best, you will need a DirectX 10 capable graphics card and a licensed copy of Windows Vista. There is also no guarantee that games will have backwards compatibility with DirectX 9, but the chances are high.

DirectX 10 alters a lot of things, but the biggest change is the introduction of a geometry shader. This means that geometry is no longer processed on the CPU, but instead created directly on the GPU where it can then be manipulated or destroyed. This is a natural progression and lends itself easily to having physics processed on the GPU as well. With this in place, a lot less data is being inefficiently transferred across the PCI Express bus.

With more and more load being taken off the processor, Intel’s vision of us all running quad-core processors might be badly thought out. In reality, we might end up buying the cheapest Celerons we can get our hands on in order to afford a better graphics card. Perhaps AMD has demonstrated greater prescience by buying ATI.

Bearing all this in mind, I take a look at nVidia’s first DirectX 10 part and indeed the first in the world – the GeForce 8800 GTX. The card I was provided with for review was courtesy of Leadtek.

As you can see, the card looks identical to our reference card, but is a little prettier. This is the case for all board partners at the moment, but expect to see some different solutions over the coming months.

As this is a retail card, it also comes with two Molex to PCI Express converters and a Component/S-Video output cable. It comes with a copy of SpellForce 2: Shadow Wars, TrackMania Nations and PowerDVD 6.

Cooler Master also went out of its way to provide us with the RealPower 850W. This is quite a new venture for Cooler Master to go this high on the power front. It has six 12V rails, all capable of 20A, but obviously not simultaneously. Importantly, it has four PCI Express connectors, each coming off a different rail. It will be priced at around £170.
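To see why all six rails can't deliver 20A at once, here is a quick back-of-the-envelope check using only the figures quoted above. The even split across rails is my own simplifying assumption, and it ignores whatever the 3.3V and 5V outputs draw.

```python
# Figures quoted in the review: six 12V rails, 20A each, 850W unit.
RAIL_VOLTAGE_V = 12
RAIL_LIMIT_A = 20
RAIL_COUNT = 6
PSU_RATING_W = 850

# Power available from a single rail at its 20A limit.
per_rail_w = RAIL_VOLTAGE_V * RAIL_LIMIT_A

# Power that would be needed if every rail ran at 20A simultaneously.
all_rails_w = per_rail_w * RAIL_COUNT

# Assumption: the whole rating is spread evenly over the 12V rails.
# This is an upper bound on the sustained average current per rail.
avg_current_a = PSU_RATING_W / (RAIL_VOLTAGE_V * RAIL_COUNT)

print(f"One rail at its limit: {per_rail_w}W")
print(f"All six at their limit: {all_rails_w}W (rating is {PSU_RATING_W}W)")
print(f"Even split across rails: about {avg_current_a:.1f}A each")
```

In other words, any one rail can peak at 20A, but the total across all six is capped by the unit's overall rating.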

If you hadn’t noticed above, G80, or 8800 GTX as it’s better known, requires two PCI Express connectors. The power draw on these cards is not significantly higher than previous generations, but as part of the PCI Express specification, above a certain power draw, a second connector is required.
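As a rough illustration of that rule, the sketch below assumes the commonly cited PCI Express limits of 75W from the x16 slot and 75W from each six-pin connector. Those limits are not stated in this review, and the board power values are hypothetical examples, not measurements of any card.

```python
import math

# Assumed limits (not from this review): 75W via the x16 slot,
# 75W via each six-pin PCI Express power connector.
SLOT_LIMIT_W = 75
SIX_PIN_LIMIT_W = 75


def six_pin_connectors_needed(board_power_w):
    """Return how many six-pin connectors a board of this power would need."""
    extra_w = max(0, board_power_w - SLOT_LIMIT_W)
    return math.ceil(extra_w / SIX_PIN_LIMIT_W)


# Hypothetical board power figures, chosen only to show the thresholds.
for watts in (60, 140, 151):
    print(watts, "W ->", six_pin_connectors_needed(watts), "connector(s)")
```

Under these assumptions, anything above 150W tips a card over into needing a second connector, even if it only exceeds that figure slightly.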

In actuality, ATI’s X1900 series should have had two connectors as well. That is why finding a power supply that would work in CrossFire was next to impossible. Only the units that didn’t automatically switch off when more than 20A was drawn would work. Ironically, these were often the cheapest units, as the cut-off is a mandatory safety feature of the ATX specification.

The G80 processor works in a very different way to previous generations. Take the GeForce 7900 GTX as an example – it has 24 pixel shader pipelines and eight vertex shaders. Each of these units is designed to do a specific task and nothing else. Naturally, this 24/8 distribution is based on analysing games and how they distribute their code. However, there will be scenarios where the pixel pipelines are underutilised while the vertex shaders work overtime, and vice versa.

This has the effect of the chip running inefficiently because of bottlenecks. Wouldn’t it be nice if unused units could lend a hand in other areas?

That is where the G80 architecture comes in. Instead of specific function units, it has 128 “streaming processors”, which can perform any task necessary. This means they can be pixel shaders, vertex shaders, geometry shaders, or even perform other functions like processing physics. This is what is known as a “unified shader architecture”.

This should mean that in a gaming environment, every unit is working on the scene and it is purely a matter of distribution. By having all the units working, this should greatly reduce internal bottlenecks and increase frame rates dramatically.
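The bottleneck argument can be sketched with a toy model. This is purely illustrative and not how either chip actually schedules work: I assume every unit does one unit of work per time step, I ignore all overheads, and I give the unified design 32 units (rather than G80's 128) so that it matches the 24 + 8 total of the fixed design. The workload numbers are made up.

```python
def fixed_frame_time(pixel_work, vertex_work, pixel_units=24, vertex_units=8):
    """Dedicated units: the frame waits for whichever pool finishes last."""
    return max(pixel_work / pixel_units, vertex_work / vertex_units)


def unified_frame_time(pixel_work, vertex_work, units=32):
    """Unified units: any unit can take any work, so the load is shared."""
    return (pixel_work + vertex_work) / units


# Hypothetical workloads (arbitrary work units), each totalling 320.
workloads = {
    "matches the 24/8 split": (240, 80),
    "vertex heavy": (160, 160),
    "pixel heavy": (310, 10),
}

for name, (pixel, vertex) in workloads.items():
    fixed = fixed_frame_time(pixel, vertex)
    unified = unified_frame_time(pixel, vertex)
    print(f"{name}: fixed {fixed:.2f}, unified {unified:.2f}")
```

When the workload happens to match the 24/8 split, both designs take the same time. As soon as it drifts away from that ratio, the fixed design is held up by its busier pool while the other sits idle.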

Although this simplifies the difference from card to card, it does add another source of confusion – a second clock speed. The core of the 8800 GTX runs at 575MHz, but the streaming processors run on a different clock of 1.35GHz. Although previous cards also had multiple clock speeds, only now has nVidia decided to make these known (and adjustable).

The GeForce 8800 GTX has 768MB of GDDR3 memory, running at 900MHz (1,800MHz effective) on a 384-bit interface. That’s quite an astonishing amount of bandwidth and a fairly sizable frame buffer to store data in too. It’s interesting to see nVidia hasn’t chosen to move to GDDR4, but evidently they don’t see it as necessary yet. Naturally, the G80 processor has support for it.
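For those who want to put a number on that bandwidth, the peak theoretical figure follows directly from the bus width and effective memory clock quoted above. This is a simple sketch of the usual calculation, using decimal gigabytes.

```python
# Figures quoted in the review.
BUS_WIDTH_BITS = 384
EFFECTIVE_RATE_MHZ = 1800  # million transfers per second

# Each transfer moves the full bus width; convert bits to bytes.
bytes_per_transfer = BUS_WIDTH_BITS // 8

# Peak theoretical bandwidth in GB/s (1 GB = 10^9 bytes).
bandwidth_gb_s = bytes_per_transfer * EFFECTIVE_RATE_MHZ * 1e6 / 1e9

print(f"{bytes_per_transfer} bytes per transfer")
print(f"{bandwidth_gb_s:.1f} GB/s peak theoretical bandwidth")
```

That works out to 86.4GB/s, though this is a theoretical peak rather than something you would measure in a game.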

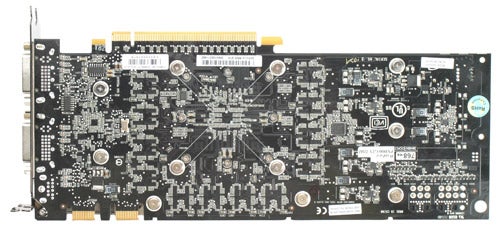

nVidia has stayed with a reliable 90 nanometre process for G80. With all the new technology, this has made for a large chip and therefore a large board. As you can see above, it’s larger than the already large 7900 GTX. It’s actually longer than the motherboard we used for testing, which may cause problems in a number of cases. Most of the extra size is power regulation for the power connectors. It’s quite bulky too. However, the cooling solution that nVidia has employed, and used here by Leadtek, is superb. Despite the heat that the chip must be emanating, the fan always ran quietly, which is a major boon for anyone wanting to build a system around one or even two of these.

You might also notice an extra connection for SLI. This is something we noticed on ATI’s X1950 Pro, which follows the same principle. By having two connectors, there is bandwidth in both directions and more of it.

With the chip being completely remade, nVidia has added support for simultaneous HDR and FSAA – an area ATI has previously been alone in supporting. On top of this, overall image quality has been improved considerably. As well as the normal timedemo testing I spent the best part of a day checking out the image quality in top games. Without a doubt, there is a huge improvement in this area.

Windows Vista isn’t out yet, and neither is DirectX 10, or any DirectX 10 games. So although we can test this in current environments, it’s really missing the point and will have to be revisited next year, with an obvious update to our testing routine.

For testing this card, I used our reference Intel 975XBX “Bad Axe” motherboard with an X6800 Core 2 Duo, coupled with 2GB of Corsair CMX1024-6400C4 running at 800MHz 4-4-4-12. My X1950 XT-X and GeForce 7950 GX2 benchmarks were taken straight from my X1950 XT-X review, in which I used WHQL 6.8 Catalyst drivers, and WHQL 91.31 drivers.

For the 7900 GTX and the 8800 GTX, I used the newer 96.94 drivers. The Counter-Strike portion of the testing has changed slightly, so these results can’t be compared to the older cards and have therefore been removed from the graphs.

I ran Call of Duty 2, Counter Strike: Source, Quake 4, Battlefield 2, Prey and 3DMark06. Bar 3DMark06, these all run using our in-house pre-recorded timedemos in the most intense sections of each game I could find. Each setting is run three times and the average is taken, for reproducible and accurate results. I ran each game test at 1,280 x 1,024, 1,600 x 1,200, 1,920 x 1,200 and 2,048 x 1,536 each at 0x FSAA with trilinear filtering, 2x FSAA with 4x AF and 4x FSAA with 8x AF.
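To give a sense of how much testing that adds up to, the routine above can be laid out as a small sketch. The game list, resolutions and settings come straight from the text; the three frame rates at the end are placeholder values to show the averaging, not real results.

```python
from itertools import product
from statistics import mean

# Timedemo games listed in the review (3DMark06 is run separately).
GAMES = ["Call of Duty 2", "Counter-Strike: Source", "Quake 4",
         "Battlefield 2", "Prey"]
RESOLUTIONS = [(1280, 1024), (1600, 1200), (1920, 1200), (2048, 1536)]
QUALITY = [("0x FSAA", "trilinear"), ("2x FSAA", "4x AF"), ("4x FSAA", "8x AF")]
RUNS_PER_SETTING = 3

# Every resolution is tested at every quality level.
configs = list(product(RESOLUTIONS, QUALITY))
total_runs = len(GAMES) * len(configs) * RUNS_PER_SETTING


def average_fps(run_results):
    """Average the frame rates from the repeated runs of one setting."""
    return mean(run_results)


print(f"{len(configs)} settings per game, {total_runs} timedemo runs per card")
# Placeholder frame rates for one setting, purely for illustration.
print(average_fps([58.0, 60.0, 62.0]))
```

That is 12 settings per game and 180 timedemo runs per card before 3DMark06 is counted.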

Performance is quite simply superb and in most cases it is 50-100 per cent faster. At 1,280 x 1,024 the difference wasn’t quite as obvious as in most cases it was CPU limited. As per usual, it was in the upper resolutions, or when AA was switched on that the differences were apparent. Quite frankly, the 21 graphs at the end of this review speak for themselves.

Verdict

Without a doubt, this is the fastest graphics card currently available, with the best image quality we’ve ever seen.

At this stage, we can only predict what DirectX 10 performance will be like. So I would only recommend buying this card if you really want or need the best possible DirectX 9 performance and image quality, say if you’re running a 24in display or Dell’s 30in monster. If you are aiming for DirectX 10, wait until next year, when we will be able to test on Windows Vista and compare to ATI’s rival card.

For such a radically new architecture, I can only be impressed with how problem-free the move to a unified shader architecture seems to have been. When SLI is combined with DirectX 10, I can even see scaling getting closer to a 100 per cent performance increase than ever before.

This is an amazing leap forward for nVidia and for the graphics industry as a whole.

Score in detail

- Value 7
- Features 10
- Performance 10