AMD ATI Radeon HD 3870 X2 Review

ATI bolts two of its fastest graphics chips together on one card. Is it madness or sheer brilliance?

Key Specifications

- Review Price: £280.00

ATI has had a rough time of it over the past year or so, with every one of its last-generation cards failing to live up to expectations and nVidia’s equivalents consistently beating them on performance. However, with the release of the HD 3870 and HD 3850 at the tail end of last year, ATI looked to be on the up again. With decent performance, unbeatable features, and competitive prices, these cards made for a worthwhile alternative to nVidia’s mainstream and enthusiast products.

Unfortunately, while the big money is to be made in these mid-range (£50 – £150) markets, something called the halo effect means that having the fastest card available still has a significant influence over the general public’s buying habits. Put simply, if someone hears that one company makes the fastest card available, they’re going to assume the other products that company produces are also the fastest in their respective sectors. The fact this mentality is completely ridiculous causes much frustration for manufacturers and journalists alike, but there’s nothing we or they can do about it, so manufacturers will always chase the performance crown just to convince these people.

The problem for ATI is that modifying or augmenting RV670 (the chip at the core of the HD 3870) to make it significantly faster would require a complete redesign of the underlying architecture and, for a number of reasons, this is something it simply isn’t in a position to do.

An architectural redesign requires a significant investment in research and design and rushing this would cost large amounts of money and potentially lead to mistakes, which would be even more catastrophic than not releasing anything. Also, releasing a completely new chip now would’ve cut into ATI’s plans for its next generation of hardware and potentially disrupted sales of cards based on what would be perceived to be its ‘old’ architecture.

So, if a brand new chip wasn’t the solution, how could ATI leverage its current capabilities to create a single competition-beating graphics card? Simple: it stuck two RV670 chips on one card and ran them in Crossfire. The result is called the HD 3870 X2.

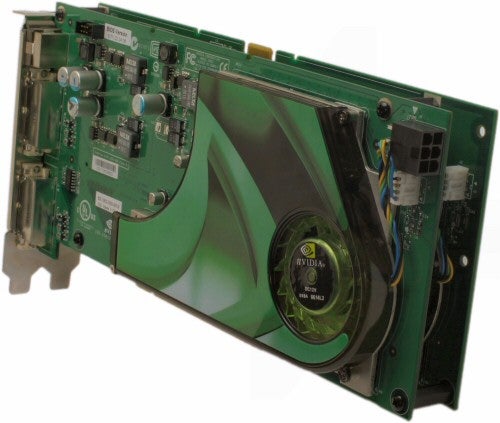

The first thing you’ll notice about the X2 is the size of the card. At 265mm in length it is as long as an 8800 GTX or 8800 Ultra, and with its standard cooler attached it weighs a colossal 1.023kg, which is actually significantly heavier than either of the above nVidia cards.

The extra weight is mainly due to the extra metal used in the cooling mechanism which, unlike the coolers used on nVidia’s high-end cards, includes a section of heatsink that covers most of the back as well as the front. Also, the front heatsink is considerably longer than anything we’ve seen before and it incorporates a lot of copper, which is heavier than the more commonly used aluminium.

There’s actually a fair bit of science going on with the stock cooler on this card. According to ATI it has been developed with “enhanced weight management” in mind, which is a term I’m sure you’re all familiar with – no?

Basically, what this amounts to is that the heatsink uses a combination of copper and aluminium to distribute the weight of the card, so its extreme length doesn’t lead to it pulling itself out of its PCI-E slot. Whatever it’s called, it seems to work and the card sat secure and happy in our test bed. Of course, the big test will come from mounting it in a tower case, where the card is no longer resting on the motherboard but hanging from the PCI bracket and PCI-E slot. You’ll have to make sure it’s securely screwed in place or we can guarantee you’ll end up with either a snapped PCI-E connector on your card or a PCI-E slot ripped from your motherboard.

Like most large graphics cards, the X2 uses a centrifugal fan to suck air from inside the case and blow it over the heatsinks and out the back of the card. As we would have expected, it performed very well, remaining reasonably quiet when under load and to all intents and purposes becoming silent when the card was idling.

One last note on the cooler: we’ve seen at least one card partner go a completely different route to the ATI stock cooler by removing the heatsink on the back and replacing the single exhaust fan with two fans that blow down onto the card. We’ve given this card a quick run-through (expect a full review soon) and these changes don’t seem to affect performance, but they have made the card considerably lighter, which is no bad thing.

So, finally we come to the all-important testing, which this time is a bit different to usual. Bearing in mind the previous page’s caveats about how this card is likely to perform, we have extended our game testing just this once to include five of our favourite games of the last year or so. It’s not a comprehensive list and there’s every chance you may find a game that doesn’t play nice with the X2, but we reckon that if it can get through our extended list of titles, the X2 is well on its way to being a viable solution.

New to the table are Bioshock (our Game Of The Year), The Elder Scrolls IV: Oblivion, World In Conflict, Call Of Duty 4, and Supreme Commander. Each game is run at three resolutions – 1,680 x 1,050, 1,920 x 1,200, and 2,560 x 1,600 – with anisotropic filtering set to 16x and multi-sampling transparency anti-aliasing turned on. Due to time constraints we’ve only tested at two full-screen anti-aliasing settings – off and 4x – where applicable, but we feel that should be enough to demonstrate things nicely.

We used a combination of in-game benchmarks and manual run-throughs recorded with FRAPS. Each setting was run three times and the average taken to ensure a fair and accurate final figure.
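As a rough illustration of that averaging step, the sketch below takes the frame rates from three repeats of one setting and reports the mean. The function name and the numbers are hypothetical placeholders, not our measured results.

```python
from statistics import mean


def average_fps(runs):
    """Return the mean frame rate across repeated runs of one setting."""
    if not runs:
        raise ValueError("need at least one run to average")
    return mean(runs)


# Hypothetical frame rates from three repeats of one setting (not measured data)
example_runs = [58.0, 61.0, 60.0]
result = average_fps(example_runs)
print(f"{result:.1f} fps")
```

Averaging over repeats mainly smooths out the variation you get from manual run-throughs, where no two passes through a level are quite identical.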

As can clearly be seen, the X2 and 8800 Ultra take turns in claiming top spot, with the Ultra probably just coming in ahead overall. What’s important to note, though, is how relatively small the increase in performance from the single 3870 to the X2 is for many of the games. Sure, there’s a good 50% increase for most games, but considering the card contains twice the processing power it’s reasonable to hope for more.
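To put that 50% figure in context, here is a minimal sketch of how multi-GPU scaling efficiency might be worked out, assuming ideal scaling means the frame rate doubles with two GPUs. The 40fps baseline is a made-up number chosen purely to show the arithmetic.

```python
def scaling_efficiency(single_fps, multi_fps, gpu_count=2):
    """Fraction of the ideal multi-GPU gain that was actually achieved.

    Ideal scaling is taken to mean multi_fps == single_fps * gpu_count.
    """
    if single_fps <= 0 or gpu_count < 2:
        raise ValueError("need a positive baseline and at least two GPUs")
    actual_gain = multi_fps / single_fps - 1.0
    ideal_gain = gpu_count - 1.0
    return actual_gain / ideal_gain


# Hypothetical baseline of 40 fps with a 50% uplift on the dual-GPU card
print(scaling_efficiency(40.0, 60.0))  # 0.5
```

In other words, a 50% uplift means the second GPU is delivering only about half of what it theoretically could.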

This is potentially both a good and a bad thing as it means there could still be performance to be gleaned from the card through a bit more optimisation. On the flip side, however, this could be as good as it gets, in which case it’s difficult to recommend the HD 3870 X2 on performance alone, which is why it’s lucky for ATI we also take into account price in our evaluation.

With 8800 Ultras still demanding the best part of £400, the HD 3870 X2 is actually a good wedge cheaper – certainly there’s enough of a saving to buy yourself a couple of games. This brings it more in line with the 8800 GTX which, although not tested in all these games, is generally around ten percent slower than the Ultra in any given game. With this in mind, the HD 3870 X2 suddenly becomes a much more tempting proposition. Moreover, the extra multimedia functionality of the X2 certainly puts it ahead of either the GTX or Ultra.

That said, we still wouldn’t recommend outright that you go and buy the HD 3870 X2, because there’s a nagging doubt in the back of our minds that, no matter how much ATI assures us, driver support for the latest and greatest games or for more obscure titles is going to be slow to arrive, if it ever does. Sure, if you have a spare £300, you are in the market for a graphics card, and you don’t already have an 8800 GTX or 8800 Ultra, then the X2 would be a good purchase, but if you’re a bit hard up, it’s probably not worth losing any limbs over.

Verdict

The ATI HD 3870 X2 is probably the most convincing example of multi-GPU on a single card we’ve ever seen, often beating the long-standing performance champion, the nVidia 8800 Ultra. However, the heavy reliance on after-market support and co-operation from games and driver developers means there’s always going to be the risk that the latest games won’t play nice with this card. So, for that reason, we can’t unreservedly recommend it.

Before we move on to how the HD 3870 X2 performs, we should really talk a bit more about the merits of this whole multi-GPU idea because, although multi-GPU solutions have been around for a long time, they’ve never really managed to convince completely, at least perhaps up until now.

We’ve seen a number of dual GPU graphics cards come and go over the years but they’ve seldom stuck around for long, been readily available, or used particularly elegant designs. However, it’s none of these issues that have been the real sticking point of dual (or multi) GPU solutions. No, the real killer is the variable nature of their performance.

The nVidia GeForce 7950 GX2 is one of the more successful examples of dual-GPU on one card.

The problem is that for a multi-GPU solution to work properly you need software – both drivers and games – to support it. If that support isn’t there, at best performance will only equal what you’d get using a single card, and at worst it could actually make performance worse than a single card or even cause the game to not work at all.

Now, these issues can be, and generally are, fixed eventually, but it can take weeks or even months for new drivers or game patches to be released and, if you’ve just spent a few hundred pounds on a new graphics card, having to wait even a week is not going to sit well.

Can the AMD ATI Radeon HD 3870 X2 prove to be a reliable multi-GPU solution?

Of course, ATI has promised that supporting the X2 will be of utmost priority, with ever more co-development work being put in to ensure games work properly straight out of the box. Moreover, it has also been suggested that multi-GPU is here to stay and we will be seeing single-card, multi-GPU solutions more and more often as both nVidia and ATI look for more ways to push the performance envelope. So, hopefully multi-GPU compatibility issues will be a thing of the past.

All we know today though is that performance will vary wildly depending on what games (or other programs, for that matter) you’re running. During our testing we encountered problems with both Call Of Duty 4 and Enemy Territory: Quake Wars and, although we eventually got figures that looked correct, it took a fair amount of toing and froing with ATI and tinkering with our test system to get to that stage and even then the performance was mediocre at best. More to the point though, we had no such problems with any of the single cards.

Ultimately, whether you’re willing to take the risk depends on your gaming habits. If you’re the type that wants the latest games the day they’re released then there’s a very real chance the HD 3870 X2 may not deliver the performance you expect immediately. On the other hand, if you’re a bit more relaxed about your gaming and are willing to wait a while for new drivers or game patches, if they’re needed, then the HD 3870 X2 will suit you fine.

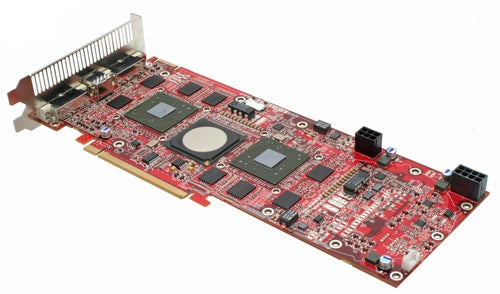

As the cores that power the HD 3870 X2 are exactly the same as those used in the HD 3870, we won’t talk in detail about the features to be found therein. However, we will just reiterate that, by virtue of it using two RV670s, the R680 (as the HD 3870 X2 is codenamed) features 640 stream processors split into 10 clusters of 64 and also has 32 texture units and 32 render output units (ROPs). It also incorporates all the new PowerPlay power saving features, although inevitably it still consumes about twice the power of a single HD 3870 at any given load level, resulting in it being the most power-hungry card we’ve ever tested when under full load.

Also present are all the new multimedia capabilities that were added to the HD 3870. So you get full acceleration of HD video playback and all the image enhancement techniques as well as HDCP compliant outputs so you can play protected HD content like commercial Blu-ray and HD-DVD discs on your computer.

The cores run slightly faster than on the single HD 3870, seeing an increase of 50MHz from 775MHz to 825MHz, so even if Crossfire is having trouble, you should at least get an extra frame or two per second over a single card. Aside from that, though, it really is just what you’d expect from two RV670s bolted together.

In contrast to the increase in core clock speed, ATI has swapped the faster GDDR4 (2.25GHz) memory of the HD 3870 and replaced it with slower GDDR3 (1.8GHz) for the X2. Although no reason was given for the switch, it’s reasonable to assume that memory bandwidth isn’t a limiting factor and using slower memory could save ATI some money on what is already an expensive card.
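For a sense of scale, the following back-of-envelope sketch estimates peak memory bandwidth per GPU for the two memory types. It treats the quoted figures as effective data rates and assumes a 256-bit memory bus per GPU; the bus width is our assumption for illustration and isn't stated above.

```python
def bandwidth_gb_per_s(effective_rate_ghz, bus_width_bits=256):
    """Estimate peak memory bandwidth in GB/s for one GPU.

    bus_width_bits defaults to 256, which is an assumption made for
    this sketch rather than a figure quoted in the review.
    """
    return effective_rate_ghz * bus_width_bits / 8


gddr4 = bandwidth_gb_per_s(2.25)  # HD 3870 (GDDR4)
gddr3 = bandwidth_gb_per_s(1.8)   # HD 3870 X2, per GPU (GDDR3)
drop = 1 - gddr3 / gddr4          # relative loss from the slower memory

print(f"GDDR4: {gddr4:.1f} GB/s, GDDR3: {gddr3:.1f} GB/s, drop: {drop:.0%}")
```

Whatever the true bus width, the relative drop depends only on the two data rates: 1.8GHz is 80% of 2.25GHz, so each GPU on the X2 has about a fifth less memory bandwidth than a standalone HD 3870.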

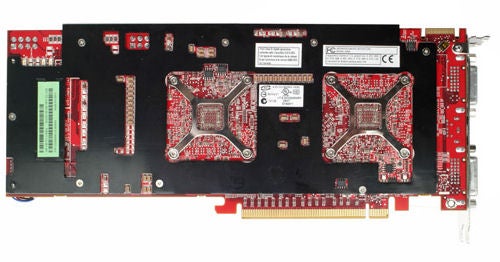

Taking a look at the PCB, we can see that nestling in between the two graphics cores is an additional chip. This is a PCI-Express bridge that controls the communication between the two GPUs. This communication would normally be performed across the motherboard’s PCI-E bus, which is where all this hoo-ha about numbers of PCI-E lanes on motherboards comes from – the more you have, the faster graphics cards can communicate. By keeping all this communication onboard, the X2 bypasses any limitations the motherboard may have.

Along the top edge of the PCB is a Crossfire connector that can be used to join two of these cards for quad Crossfire or couple an X2 with a regular HD 3870 for three-way Crossfire. Unfortunately, drivers for neither of these configurations are yet available so we’ll have to wait a while to see how this performs.

Output configuration is standard fare, with two dual-link DVI sockets and TV-out available on the back plate. However, without wishing to steal our own thunder too much, the aforementioned partner card has included four DVI outputs, so if quad monitor setups are your thing, variants on the HD 3870 X2 could be the thing to look for.

One strange difference between this card and all similarly large cards is the orientation of the two auxiliary power connectors on the top edge. Just as with the HD 2900 XT, the card requires two six-pin PCI-E power connectors to run properly, and for overclocking you should use one six-pin and one eight-pin connector. The odd thing about the reference connectors, though, is that, rather than facing the connectors upwards, where they’re easy to reach when installed in a case, ATI has chosen to mount them perpendicular to the PCB. In our experience this makes it more difficult to attach and remove the power cables, particularly if you’re using two of these cards at once.

Fortunately, once again the great unmentionable partner card (OK, it’s made by ASUS) shows that ATI’s board partners really are willing to tread a different path to that dictated by ATI, as ASUS has oriented the power connectors in the conventional way.

Trusted Score

Score in detail

- Value: 7
- Features: 9
- Performance: 8