Why the Pixel 4 shows Google is now playing camera catchup

Google’s Pixel phones have blazed a photography trail for smartphones, but their computational scythe has cleared a path for rivals – and the Pixel 4 shows that it’s no longer leading the smartphone camera pack.

Google’s Pixel 4 camera unveiling, led by Stanford computer science whizz Marc Levoy, was refreshingly free of hyperbole – and included a jab at Apple’s overblown description of its incoming ‘Deep Fusion’ tech, with Levoy stating: “This isn’t mad science, it’s just simple physics.”

But even taking into account Google’s modest marketing, and the Pixel 4’s £669 price tag, its camera is clearly playing catchup with recent rivals like the iPhone 11.

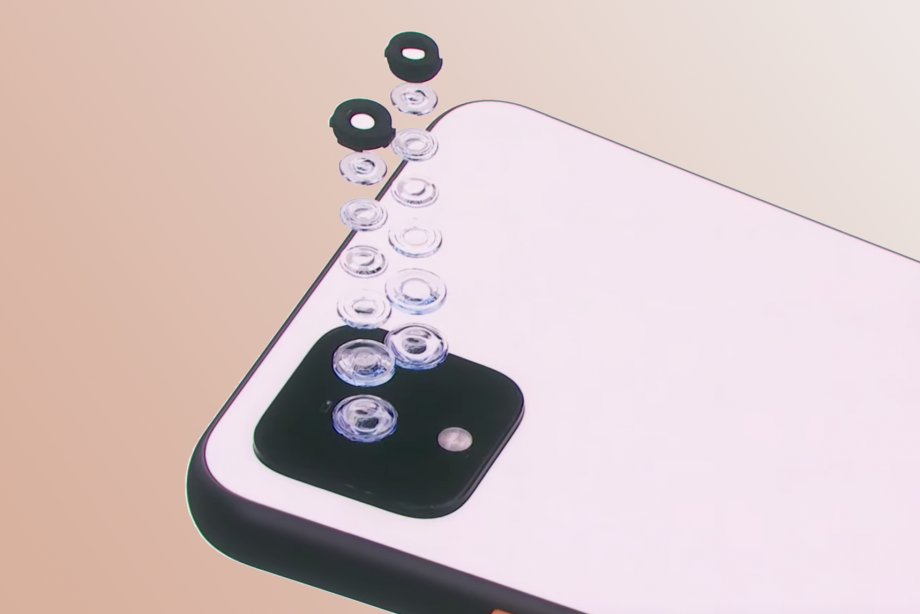

The reason for this is simple: Google has been so keen to push the limits of machine learning and computational photography, it’s overlooked the assistance good old-fashioned hardware can give to its flagship’s snapping skills.

It’s admitted as much with the introduction of a new 2x telephoto lens on the Pixel 4. This gives a much-needed optical boost to Google’s digital Super Res Zoom mode.

What Google achieved with Super Res Zoom on the Pixel 3 was pretty incredible – the mode takes a quick burst of 15 shots, using your hand-shake and optical image stabilisation to shift the lens around. But it still wasn’t as good as the optical zoom on even the iPhone XS, let alone the Huawei P30 Pro’s 5x zoom.

In fact, these rivals seem to have Google a little rattled, because in announcing the Pixel 4’s new lens it rather unnecessarily derided the idea of ultra wide-angle lenses, calling telephoto “more important”. That’s pretty debatable. A more likely explanation is that telephoto is simply better suited to its computational trickery. But at least you now have the option of wide-angle portrait pics from the front camera.

It’s not just in hardware that Google seems to be playing camera catchup either. Other new Pixel 4 features that Google crowed about were Live HDR+ (seeing the Pixel’s HDR effects in the viewfinder in real time, rather than after the fact) and simulated, lens-style bokeh effects. Both of these have been on the iPhone for a while.

The Pixel 4’s other new features are welcome, if relatively modest, refinements of existing camera features. Its Portrait Mode now calculates depth from both dual pixels and dual cameras, while Night Sight has a new astrophotography mode that combines 15 exposures with a single shutter press.

Naturally, that mode looks extremely impressive from the shot taken by Google’s astrophotography team. But it’s also an extremely niche use case – in fact, it’s quite likely that I wouldn’t find myself in a shooting situation like that before the Pixel 5 comes out.

Are we hitting a computational ceiling when it comes to phone cameras? Almost but not quite, as Google suggested with its teasing of an upcoming software update that will boost the Pixel 4’s dynamic range in Night Sight shots.

Still, as I’ve previously argued, it feels like Google’s camera talents could be more usefully targeted at the ways people actually use their phone cameras – that is, mostly taking visual notes and reminders, rather than National Geographic-worthy shots of the moon.

There’s no doubt the Pixel 4 is still shaping up to be one of the best phone cameras around. And with software, rather than hardware, still being the main driver of phone camera innovation, the Pixel 4 could yet surge past its rivals again. After all, Night Sight was a software update that came over a month after the release of the Pixel 3.

But, right now, the Pixel 4 shows that Google is no longer the only computational ‘mad scientist’ in town and that strong hardware is still the foundation that flagship phone cameras need to be built on.