iPhone 7 camera in-depth: Apple said you’ll love it and we think you actually might

What’s all this dual-camera talk about?

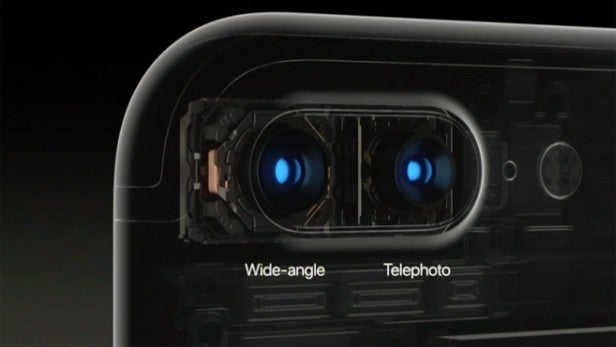

Every year Apple gets accused of making a boring iPhone upgrade. There’s nothing boring about the iPhone 7 Plus camera, though. It’s a dual-lens system, but it isn’t anything like the dual-camera phones we’ve reviewed to date.

Here we take a deep dive into each of the important parts of the new camera and explain what Apple has done and why it’s really rather cool.

1. It has “lossless” zoom

The most important part of the iPhone 7 Plus camera is that it actually contains two cameras. Before the launch my bet was that Apple would use a colour sensor and a black-and-white one to improve low-light performance beyond the capabilities of both the iPhone 6S Plus and the Samsung Galaxy S7.

That’s not what the iPhone 7 Plus has, though.

It uses a 27mm lens and a 56mm one. So that’s a 1x-zoom lens and a 2x-zoom lens. This gives the phone the equivalent of a 2x optical zoom. That may not sound all that impressive, but it is in a phone only 7.3mm thick.

Such a dual-camera setup also separates the iPhone 7 Plus from other dual-sensor phones we’ve seen before, like the HTC One M8 and Huawei P9. This is something new.

When you use the iPhone 7 Plus, you’ll be able to select 1x or 2x views, or anything between 1x and 10x zoom. There’s a good reason to stick to the native 1x and 2x focal lengths if you care a lot about image quality, though.

Beyond 2x zoom, the iPhone 7 Plus reverts to standard digital zoom. This is like opening up an image in Photoshop, cropping it and expanding it. Terrible, in other words.

There’s also an issue with zoom settings between 1x and 2x. It’s all down to what these images are made of. At, say, 1.3x zoom, the scene is larger than the 2x-zoom lens can see, but small enough that the 1x lens can’t use its full resolution to render the image. The iPhone’s brain then has to squish together the image data from the parts of the scene each lens can ‘see’ and hope for the best.
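To put rough numbers on that, here is a minimal sketch of how much resolution survives when a single lens is cropped to reach a given zoom setting. It assumes 12-megapixel sensors behind both lenses and treats digital zoom as a plain crop. It does not model Apple's merging of the two cameras, and the function name is ours, purely for illustration.

```python
SENSOR_MEGAPIXELS = 12.0  # assumed resolution of each rear sensor


def cropped_megapixels(zoom, native_zoom=1.0, sensor_mp=SENSOR_MEGAPIXELS):
    """Megapixels left after cropping one lens's frame to reach `zoom`.

    Cropping by a linear factor k keeps 1/k^2 of the pixels.
    """
    if zoom < native_zoom:
        raise ValueError("a lens cannot see wider than its native view")
    linear_crop = zoom / native_zoom
    return sensor_mp / linear_crop ** 2


for zoom in (1.0, 1.3, 2.0, 4.0, 10.0):
    # Below 2x only the 1x lens covers the whole scene; from 2x up,
    # the 2x lens is the natural starting point.
    native = 1.0 if zoom < 2.0 else 2.0
    mp = cropped_megapixels(zoom, native_zoom=native)
    print(f"{zoom:>4}x from the {native:.0f}x lens: {mp:.2f} MP of real detail")
```

On these assumptions, a 1.3x shot taken from the 1x lens alone has only around 7 megapixels of real detail to work with, which is why the phone leans on the second lens to fill in the centre of the frame.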

The only focal lengths equivalent to a normal optical zoom are 1x and 2x. The range in between is made up of software composites. Still, it’s exciting stuff.

Apple hasn’t come up with this tech itself. In 2015 it acquired LinX, a company that had been working on this tech for years and had outlined a very similar camera setup in 2014.

2. The lens is much faster

Even if you shoot at 1x zoom 90% of the time, image quality should be significantly improved in low-light conditions. The iPhone 7 Plus has a new 6-element f/1.8 lens.

The older iPhone 6S Plus has a relatively slow f/2.2 lens, which had already been comfortably outstripped by rival Android phones when the last generation was announced. The f-stop rating tells you how wide a lens’s aperture is relative to its focal length: the lower the number, the wider the opening.

The wider the lens aperture, the more light can get through to the sensor. Apple says 50% more light gets to the sensor this time around, which will improve low-light performance.
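Apple's 50% figure can be sanity-checked with the standard rule that the light a lens gathers scales with the inverse square of its f-number. The sketch below is our own arithmetic rather than anything Apple has published, and the function name is illustrative.

```python
import math


def relative_light(old_f_number, new_f_number):
    """How many times more light the new lens passes than the old one.

    Light gathered is proportional to 1 / f_number^2, so the ratio
    is (old / new) squared.
    """
    return (old_f_number / new_f_number) ** 2


gain = relative_light(2.2, 1.8)  # iPhone 6S Plus -> iPhone 7 Plus
stops = math.log2(gain)          # the same gain expressed in stops

print(f"f/2.2 -> f/1.8: {gain:.2f}x the light ({(gain - 1) * 100:.0f}% more)")
print(f"That is about {stops:.2f} of a stop")
```

The result lands just under 1.5x, which squares with Apple's claim. It is a little over half a stop: a useful gain, if not a transformative one on its own.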

It’s great, although not the widest-aperture phone camera around. The Samsung Galaxy S7 has an f/1.7 lens. Of course, it only has the one rear sensor.

3. Optical stabilisation is in

A fast lens doesn’t mean much in the phone world unless it’s partnered with optical image stabilisation. Even larger phone sensors are tiny, and a fast lens isn’t enough to ensure good low-light performance.

OIS compensates for small hand movements so that the image stays steady on the sensor while light is pouring in, keeping your shots sharp. Night shots demand slower shutter speeds, meaning there’s more chance that little hand movements will change what the camera sees while it’s taking in light.

Last year, only the iPhone 6S Plus had OIS. This year both iPhone 7 models do.

There are a few questions left to answer here, though. First, we don’t know how hard Apple has pushed the optical image stabilisation. The Samsung Galaxy S7’s night-time image quality is only as good as it is because it’s willing to make the shutter very slow in order to avoid ramping up sensitivity, which is what makes your photos noisy.

The iPhone 6S Plus’s limit is around 1/9 of a second, even though a good OIS system can let a shutter slow down to 1/4 of a second and keep handheld photos sharp.
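The gap between those two shutter speeds is bigger than it looks. As a rough sketch, assuming exposure scales linearly with shutter time and that any extra light can be traded one-for-one against ISO sensitivity, the difference works out as follows. The ISO 1600 starting point is a hypothetical value chosen for illustration, not a measured iPhone setting.

```python
import math
from fractions import Fraction


def light_gain(slow_shutter, fast_shutter):
    """Ratio of light collected at the slower shutter versus the faster one."""
    return float(slow_shutter / fast_shutter)


def equivalent_iso(iso, gain):
    """ISO needed for the same brightness once `gain` times more light arrives."""
    return iso / gain


gain = light_gain(Fraction(1, 4), Fraction(1, 9))  # 1/4s versus 1/9s
stops = math.log2(gain)
example_iso = 1600  # hypothetical sensitivity at 1/9s

print(f"1/4s collects {gain:.2f}x the light of 1/9s ({stops:.2f} stops)")
print(f"ISO {example_iso} at 1/9s becomes roughly "
      f"ISO {equivalent_iso(example_iso, gain):.0f} at 1/4s")
```

More than a full stop of extra light means the camera can more than halve its sensitivity for the same brightness, and lower sensitivity is what keeps noise down.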

Our second question is whether the 2x-zoom lens is also stabilised. It seems highly unlikely that it will be.

More ‘zoomed in’ lenses take up more space, which is why some DSLR zoom lenses are absolutely huge. Looking at the iPhone 7 Plus’s camera housing, the bump isn’t huge. It’s highly likely that the added size of the 2x lens roughly matches up with the extra space taken up by the 1x lens’s OIS motor.

In other words, zoomed-in photos probably aren’t going to look as good in poor lighting. Sorry.

4. Apple hasn’t had to mess up the focal length

It is reassuring, though, that Apple hasn’t had to change the focal length of the new iPhone in order to accommodate its new style. The base focal length is still 27mm in standard camera terms, meaning its view will be the same as you’re used to in recent iPhones.

5. New ISP should be able to keep up

Apple is great at maintaining standards. Through the entire iPad series, it has obsessively stuck to offering at least 10 hours of battery life.

The iPhone 7 Plus’s new image signal processor (ISP) suggests we’ll see the same excellent shooting performance seen in all recent-gen iPhones. One of the greatest, and least talked-about, strengths of iPhone cameras is that they shoot quickly with almost zero shutter lag and tend to nail difficult things like white balance and exposure.

The new ISP is destined to be one of the least-appreciated, most often-used parts of the new Apple A10 processor. It handles everything from noise reduction to exposure and white balance.

The zoom array hugely increases the pressure on image processing, particularly if you shoot between 1x and 2x zoom. With such shots, the iPhone 7 Plus has to merge the information from two sensors. It’s not a simple operation.

Apple’s Phil Schiller says it involves 100 billion operations, and claims it takes just 25 milliseconds. That’s fast enough to feel instant. We’ll see whether it stacks up in our review.
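Taken at face value, those two figures imply a hefty processing rate. The quick check below simply divides one quoted number by the other. It says nothing about what Apple counts as an "operation", which has not been specified.

```python
OPERATIONS = 100e9        # "100 billion operations", as quoted
LATENCY_SECONDS = 25e-3   # "25 milliseconds", as quoted


def implied_throughput(operations, seconds):
    """Operations per second needed to finish the work in the given time."""
    return operations / seconds


ops_per_second = implied_throughput(OPERATIONS, LATENCY_SECONDS)
shots_per_second = 1 / LATENCY_SECONDS

print(f"{ops_per_second / 1e12:.1f} trillion operations per second")
print(f"Enough headroom for about {shots_per_second:.0f} such passes a second")
```

That is around 4 trillion operations per second, a rate that would let the pipeline run dozens of times a second if the claim holds up.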

6. Its shallow depth of field doesn’t look terrible

One feature we may not see from the on-sale day is something phones like the HTC One M8 already use their dual-camera arrays for. By capturing a depth map of a scene, the iPhone 7 Plus can create a shallow-depth-of-field effect, making your subject pop by blurring out the background.

Historically, this hasn’t worked well. Poor-quality depth maps and very simple blurring of backgrounds can make your shots look like they’ve been edited in Photoshop by a 14-year-old who only spent about five minutes on the job.
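To see why the simple approach falls short, here is a toy sketch of depth-based blurring on a single row of pixels. It is emphatically not Apple's algorithm or any phone maker's actual code: the function, the box filter and the sample values are all our own assumptions, chosen to show the idea of blurring each pixel more the further it sits from the focus plane.

```python
def depth_blur(pixels, depths, focus_depth, strength=1.0):
    """Naive depth-of-field effect on one row of brightness values.

    Each pixel is replaced by the average of its neighbours, with a
    window radius that grows with its distance from the focus plane.
    """
    out = []
    for i, depth in enumerate(depths):
        radius = int(round(abs(depth - focus_depth) * strength))
        lo = max(0, i - radius)
        hi = min(len(pixels), i + radius + 1)
        window = pixels[lo:hi]
        out.append(sum(window) / len(window))
    return out


# Hypothetical row: a bright subject (depth 1) beside a darker
# background (depth 4) that contains one point of light.
row = [10, 10, 200, 200, 10, 10, 255, 10]
depth = [1, 1, 1, 1, 4, 4, 4, 4]

blurred = depth_blur(row, depth, focus_depth=1)
print([round(value, 1) for value in blurred])
```

The in-focus pixels come through untouched, but the background pixels next to the subject pick up some of its brightness, and the point of light is smeared into a dull patch rather than a glowing disc. Those are exactly the tell-tale flaws of cheap faux-bokeh.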

There is a sign that Apple’s take on the idea is going to be something special, though. At the iPhone 7 Plus launch, Apple showed off a few demo images using the algorithm, and it was the most convincing faux-bokeh effect I’ve seen from a phone.

The crucial difference is that the background doesn’t appear simply blurred. Light sources have a ‘bloom’ effect, which you can normally only get with a wide-aperture lens and a much larger sensor than the iPhone 7 Plus has.

We’ll have to wait until later in 2016 to see whether this makes it into the final software, though.

This effect will be part of the Portrait mode in the iPhone camera app, and you’ll be able to see a preview of the effect before you shoot too. With this sort of feature, you normally have to apply it in post-production, which makes it enough of a pain for most people to forget about using it.

Apple has a chance of making this bokeh effect more than a gimmick. Hopefully it’ll pull it off.

Got a question about Apple’s new camera system? Let us know in the comments.