What is Smart HDR? Explaining Apple’s new camera tech

One of the most interesting aspects of the camera upgrade that Apple has given its new iPhone XS and XS Max is ‘Smart HDR’.

Of course, many smartphones are already able to capture ‘HDR’ (High Dynamic Range) photos, which combine multiple shots at various exposures to help retain detail in shadows and highlights that would otherwise be lost. So how does Apple’s photo-boosting system work, and is it any better than the HDR we’ve already seen?

Smart HDR – how it works

The key to ‘Smart HDR’ is Apple’s new A12 Bionic chip. As Phil Schiller said at Apple’s keynote: “Increasingly, what makes incredible photos possible aren’t just the sensor and the lens but the chip and the software that runs on it.” Cue Apple’s big new play in the world of ‘computational photography’, which is powered more by software and silicon than sensors.

According to Apple, the A12 chip’s new trick is the ability to marry the iPhone’s image signal processor (the thing that takes the info from your camera’s sensor and turns it into the image on the screen) with its ‘neural engine’, the part of the chip dedicated to machine learning. In other words, ‘Smart HDR’ isn’t just about pure processing grunt: it combines that power with our phones’ increasing ability to automatically recognise what’s going on in a scene.

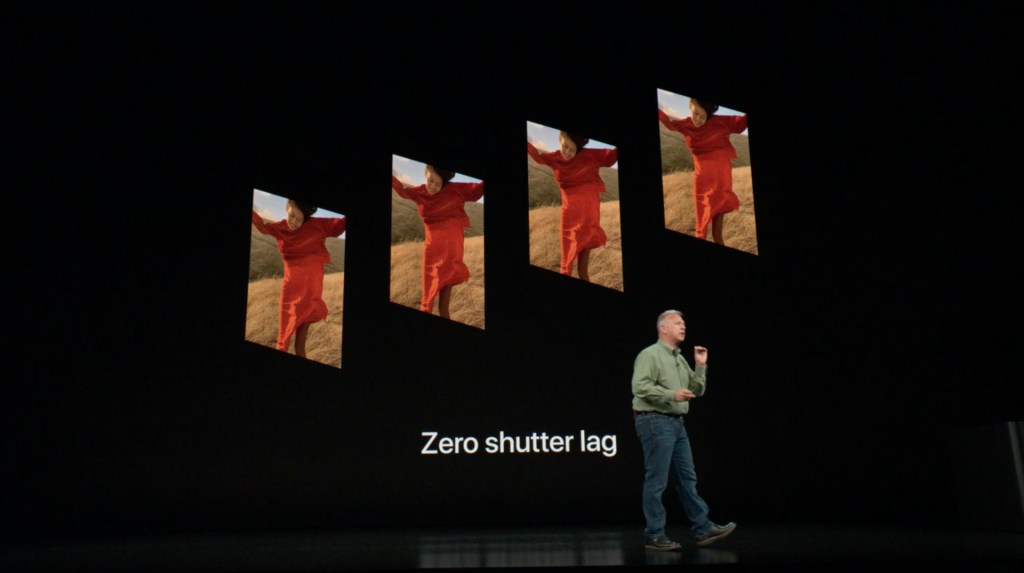

The example Apple gave was taking a photo of a subject who’s moving. In this case, ‘Smart HDR’ helps in two ways. Firstly, the camera constantly shoots a four-frame buffer, so that there’s zero lag when you hit the shutter (in a similar way to Samsung’s ‘Live HDR’).
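To make the buffer idea concrete, here is a minimal sketch of a rolling four-frame buffer in Python. It is an illustration only, not Apple’s implementation: the `FrameBuffer` class and the integer ‘frames’ are hypothetical stand-ins for real image data.

```python
from collections import deque


class FrameBuffer:
    """Keeps only the most recent `size` frames (hypothetical helper)."""

    def __init__(self, size=4):
        # A deque with maxlen drops the oldest frame automatically
        self.frames = deque(maxlen=size)

    def push(self, frame):
        self.frames.append(frame)

    def capture(self):
        # Pressing the shutter returns frames that already exist,
        # so there is nothing new to wait for
        return list(self.frames)


buffer = FrameBuffer(size=4)
for frame_id in range(10):  # the camera streams frames continuously
    buffer.push(frame_id)

shot = buffer.capture()  # the user presses the shutter here
```

Because the frames are already in memory when the shutter is pressed, the capture step only has to hand them over rather than start a new exposure.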

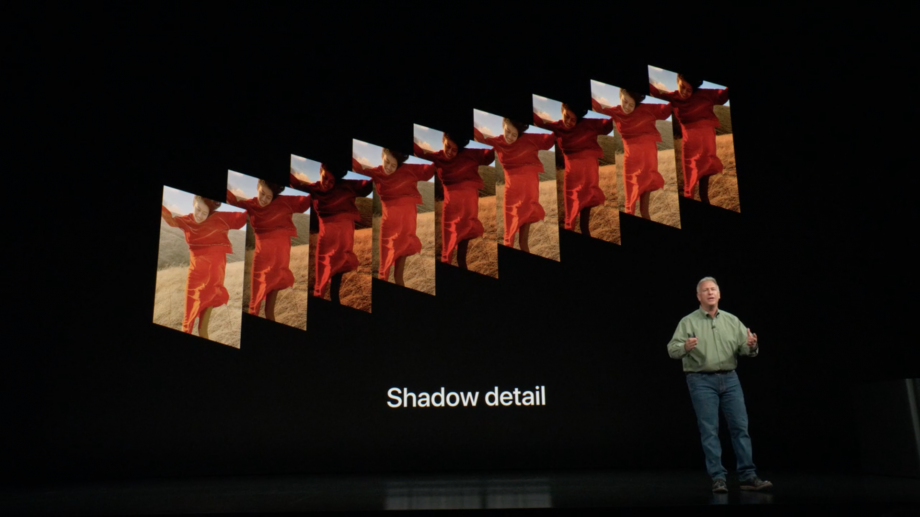

But with ‘Smart HDR’ the camera also shoots ‘interframes’ between those frames at different exposures to bring out highlight detail, plus a long exposure to capture shadow detail.

Rather than simply combining all of those frames into one shot, the neural engine (similar to what Huawei calls its ‘Master A.I’) chooses the best parts of each photo and merges them together. Hence ‘Smart HDR’.
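Apple hasn’t published how the neural engine picks the ‘best parts’, so the following is only a rough sketch of the general idea, using a classic exposure-fusion style weighting rather than anything Apple has confirmed. The function names, the `target` and `sigma` values and the three tiny four-pixel ‘frames’ are all assumptions made up for illustration.

```python
import math


def well_exposedness(value, target=0.5, sigma=0.2):
    """Weight that peaks for mid-tone pixels and falls off near black or white."""
    return math.exp(-((value - target) ** 2) / (2 * sigma ** 2))


def fuse(frames):
    """Merge same-sized greyscale frames (pixel values 0..1) pixel by pixel."""
    fused = []
    for pixels in zip(*frames):  # the same pixel position across every frame
        weights = [well_exposedness(p) for p in pixels]
        total = sum(weights)
        # Weighted average: better-exposed pixels contribute more
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused


# Three hypothetical frames of the same scene, from dark to bright
short_exposure = [0.02, 0.10, 0.45, 0.70]   # keeps highlights, crushes shadows
normal_exposure = [0.10, 0.30, 0.80, 0.98]
long_exposure = [0.40, 0.60, 0.99, 1.00]    # lifts shadows, blows highlights

result = fuse([short_exposure, normal_exposure, long_exposure])
```

In this toy version the darkest pixel ends up drawing mostly on the long exposure and the brightest region mostly on the short one, which is the effect the article describes. A real pipeline would also have to align the frames and cope with a moving subject.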

Smart HDR – is it a big deal?

Of course, we’ll have to try Apple’s new HDR mode out for ourselves when we test the iPhone XS and XS Max before they arrive in late September. Similar features like the ‘fake bokeh’ seen in many smartphone portrait modes haven’t always proven to be completely reliable. But it does sound like another step towards automating photographic features that were previously the preserve of camera nerds.

To achieve a similar effect, photographers have traditionally used the ‘bracketing’ mode on their cameras and then combined the shots themselves in post-production. While there’s clearly still a place for the precision of human editing, it’s hard to say no to the idea of having a photo editor in your pocket that can not only do one trillion operations per shot (as Apple claims) but also has the smarts to make the right editing decisions. At least most of the time.