Google LaMDA lets you converse with a paper airplane

Google has announced LaMDA, a new natural language technology, which will enable Google Assistant users to have conversations with objects, planets and… paper airplanes.

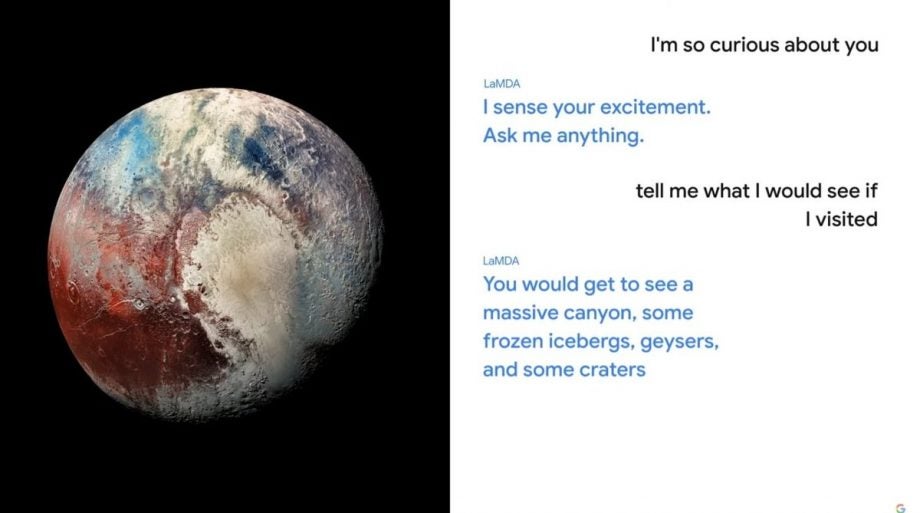

In a somewhat bizarre demonstration during the opening session of Google I/O, the company previewed a voice chat with the planet Pluto, which will enable Assistant users to learn more about the planet.

Users will be able to ask questions about the objects and, instead of a Wikipedia-style readout, the answer will come in conversational form with the subject of the conversation replying as if it had a personality of its own.

In one example, “Pluto” says: “I am not just a random ice ball, I am actually a beautiful planet.” When the Assistant user replies “well I think you’re beautiful,” the planet says “I am glad to hear that. I don’t get the recognition I deserve.”

So yeah. An interesting start to Google I/O for sure.

Google believes this tech will enable users to engage more with the Google Assistant rather than settle for canned responses. “Sensible responses keep conversations going,” Google CEO Sundar Pichai said of the tech, which, like the company’s BERT model that finds the proper context in search queries, is built on Google’s Transformer architecture.

So, for example, users will be able to ask questions like “Tell me what I would see if I visited” and the Google Assistant will be able to answer naturally, knowing the user is referring to Pluto.

Google says this tech will come to Assistant, Search and Workspace in the future, but right now the company is getting it ready for third-party developers to test.

There’s plenty more to come from Google I/O, but so far there isn’t much in the way of consumer features. We’re still awaiting the best of Android 12 and possibly a new version of Wear OS to be revealed over the course of the multi-day event.