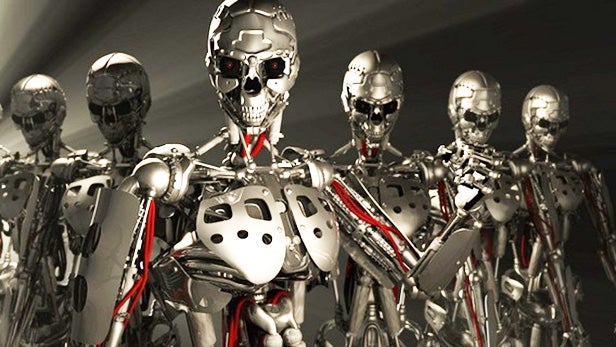

Elon Musk and Stephen Hawking call for ban on killer AI

Siri, Cortana, Google Now – they’ve always been kind to us. But the future of artificial intelligence might not be so good-natured.

That’s why the Future of Life Institute has just published an open letter warning about the dangers of militarised AI.

The letter, which calls for a ban on “offensive autonomous weapons”, has been signed by hundreds of leading experts, including Stephen Hawking, Elon Musk, Noam Chomsky, and Steve Wozniak.

It describes autonomous weapon systems as the “third revolution” in warfare, after gunpowder and nuclear arms.

The letter begins by clarifying what does and does not constitute an autonomous AI-powered weapon.

For instance, cruise missiles or remotely piloted drones where humans make targeting decisions aren’t the issue here, apparently.

However, it claims “armed quadcopters” that can search for and kill targets that meet “pre-defined criteria” are a big no-no.

The letter goes on to wax lyrical on the potential dangers that militarised autonomous weaponry could pose to humanity.

“Unlike nuclear weapons, they require no costly or hard-to-obtain raw materials, so they will become ubiquitous and cheap for all significant military powers to mass-produce,” it reads.

The letter continues: “

The letter does, however, raise arguments for the use of autonomous weaponry on the battlefield.

For example, it’s suggested that by replacing human soldiers, casualties will inevitably be reduced.

However, the letter counters this with the argument that militarised AI would lower the “threshold for going to battle”.

It ultimately concludes: “We therefore believe that a military AI arms race would not be beneficial for humanity. Starting a military AI arms race is a bad idea, and should be prevented by a ban on offensive autonomous weapons beyond meaningful human control.”

The FLI’s letter will be officially presented at the International Joint Conference on Artificial Intelligence in Buenos Aires on July 28.

This isn’t the first time tech’s finest have spoken out about killer AI. Tesla boss Elon Musk compared AI development to “summoning the demon”, while Apple co-founder Steve Wozniak boldly claimed “computers are going to take over from humans, no question”.

This news article was brought to you by your future robot overlord. All hail PCBs.