Moore’s Law: What is it and why is it dying out?

Processor technology moves on apace, but did you know that the rate at which it has progressed was predicted more than 50 years ago?

That prediction came to be known as Moore’s Law. But there’s reason to believe that it no longer holds the relevance it used to, thanks to factors relating to the market and to the very laws of physics.

Here’s the lowdown on Moore’s Law, and why it could well be dead.

Moore Moore Moore

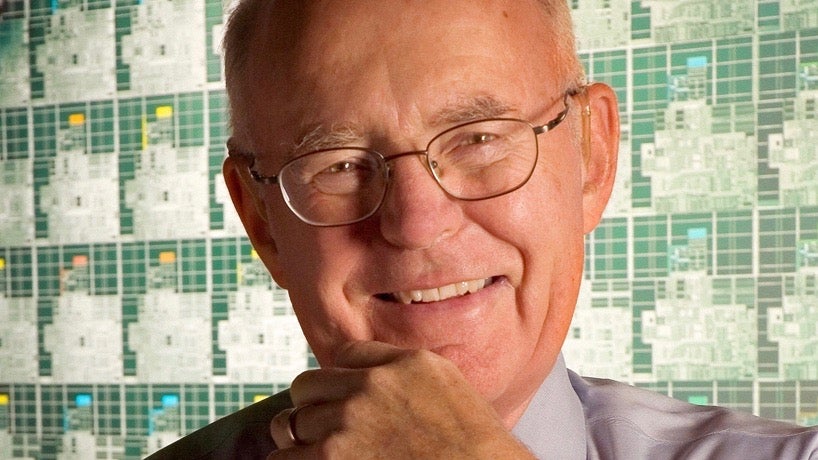

Gordon E. Moore is an American businessman, electrical engineer, and co-founder of Intel and Fairchild Semiconductor. Back in 1965 he wrote a paper that described the likely doubling of the number of transistors on a chip – and thus, roughly speaking, the chip’s performance – every year for the next decade.

Ten years later, in 1975, Moore revised that prediction to a doubling every two years. Moore’s Law, as it came to be known, was used to guide industry planning for the next several decades.
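
To put those two rates in perspective, here’s a quick back-of-the-envelope sketch in Python. The function name `growth_factor` and the ten-year window are our own choices for illustration, and the sum assumes perfectly steady doubling, which real chips never managed exactly.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Return how many times larger the transistor count becomes
    after `years`, assuming it doubles every `doubling_period_years`."""
    if doubling_period_years <= 0:
        raise ValueError("doubling period must be positive")
    # Number of doublings that fit into the time span.
    doublings = years / doubling_period_years
    return 2.0 ** doublings


if __name__ == "__main__":
    decade = 10  # illustrative time span, in years
    # 1965 prediction: a doubling every year.
    print(f"Doubling every year:      x{growth_factor(decade, 1):,.0f}")
    # 1975 revision: a doubling every two years.
    print(f"Doubling every two years: x{growth_factor(decade, 2):,.0f}")
```

Doubling every year for a decade works out to a 1,024-fold increase, while doubling every two years gives a 32-fold increase over the same period.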

It should be noted that despite its name, Moore’s Law is not actually a strict law. Rather, it was an observation, or a projection of how CPU technology would develop.

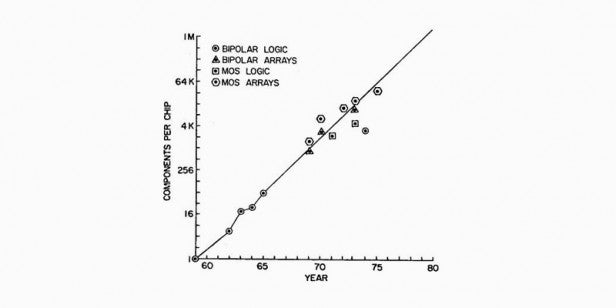

Remarkably, however, Moore’s prediction (doesn’t quite have the same ring, does it?) held largely true right up until some time around 2012.

The original Moore’s Law, which applied from 1965 to 1975

Laying down a new law

Despite a good run, many feel that Moore’s Law is now effectively defunct.

Gordon Moore himself, in a 2015 interview with IEEE Spectrum, said that he could see “Moore’s Law dying here in the next decade or so.”

Intel’s progress and its comments on the matter seem to confirm as much. In July of 2015, Intel CEO Brian Krzanich told analysts that “The last two technology transitions have signalled that our cadence today is closer to two and a half years than two.”

The company’s delayed jump from 22nm to 14nm (nm = nanometer) chips bears this out, and its next move from 14nm to 10nm looks set for a similar-sized gap. While Krzanich wouldn’t say at the time whether this was a temporary blip or a new paradigm, it looks increasingly likely that Moore’s Law has been broken.
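
For a rough sense of what that slippage means, the sketch below compares a two-year cadence with a two-and-a-half-year one over a decade. It assumes, purely for illustration, that each process transition doubles transistor density. The name `density_gain` and the ten-year window are hypothetical and don’t come from Intel.

```python
def density_gain(years: float, cadence_years: float) -> float:
    """Return the cumulative density gain after `years`, assuming
    (for illustration only) one doubling per process transition and
    one transition every `cadence_years`."""
    if cadence_years <= 0:
        raise ValueError("cadence must be positive")
    transitions = years / cadence_years
    return 2.0 ** transitions


if __name__ == "__main__":
    decade = 10  # illustrative time span, in years
    old_cadence = density_gain(decade, 2.0)   # the traditional two-year cadence
    new_cadence = density_gain(decade, 2.5)   # the cadence Krzanich described
    print(f"Two-year cadence:  x{old_cadence:.0f}")
    print(f"2.5-year cadence:  x{new_cadence:.0f}")
    print(f"Shortfall factor:  x{old_cadence / new_cadence:.0f}")
```

Under those assumptions, a decade at the old cadence yields a 32-fold gain, against 16-fold at the slower one. Half a year per step doesn’t sound like much, but it halves the end result.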

So why has CPU technology progress slowed? Why does Moore’s Law no longer apply as it used to?

Let’s get physical

One reason Moore’s Law had to come a cropper eventually is simple physics. There’s a limit to how small you can make a traditional transistor, and scientists are finding it harder and harder to shrink the manufacturing process further.

We’re now at the point, in current 14nm chips, where transistor features are small enough to be measured in atoms. At this scale, heat build-up (a processor’s worst enemy) increasingly interferes with the flow of electrons that a chip depends on.

As Tech2 points out, we’re also on the cusp of considering transistor sizes that will stray into quantum mechanics territory. Quantum mechanics is a little-understood branch of physics that seemingly defies the classical laws of physics.

We won’t get into the ins and outs of quantum mechanics now – mainly because we don’t understand it – except to say that as things get really small (to the scale of atoms and photons), they start doing weird things. Which is hardly conducive to building precise instrumentation.

Less is Moore

Another reason Moore’s Law is slowing is the type of computing most of us now do. We’re less about traditional desktop PCs, where the power and space consumed aren’t issues, and more about those bleepy little things we keep in our pockets called smartphones.

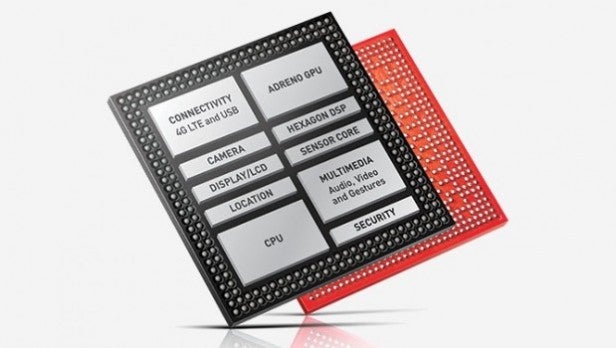

The take-off of smartphone technology has brought about the rise of a completely different sort of processor production. Power consumption and heat management are way more important in this kind of computing than they ever were before, but such processors still need to be ultra-responsive.

Intel, which has dominated the traditional computer CPU market almost from the get-go, doesn’t have much of a stake in this new market. Companies like Qualcomm and Samsung do.

The solution to those unique smartphone CPU requirements has been to adopt multiple low-power cores, and to supplement these with dedicated co-processors for things like motion sensing and graphics.

Even in the traditional computer space, most people use laptops these days, which have their own requirements for efficiency over raw speed. The result of all this has been much more incremental increases in performance for recent generations of CPU.

Even if processor manufacturers could keep pace with Moore’s Law, then, there probably wouldn’t be the same call to do so.