Computing Reviews

Check out all our latest computing reviews and previews, covering everything from top-notch laptops to powerhouse PC components and networking tech. Every item with a score has been tested by one of our computing experts.

A premium yet affordable graphical crackerjack

Crucial P5 Plus Review

An average budget SSD for PS5 expansion but nothing more

Logitech's first 60% keyboard is a solid start

Beautiful design and beastly performance

Razer's latest and greatest keyboard is a real winner

Philips 27B1U7903 Review

A work monitor with superb HDR performance and Thunderbolt 4

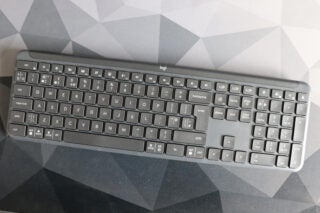

A respectable, reasonably priced office keyboard.

A powerhouse laptop with a superb 18-inch display

Extended battery life at a high price

One of the top options for PC or PS5 gamers.

An excitingly designed and competent gaming mouse

Pricey first-gen PCIe 5.0 doesn’t quite keep up
